The integration runtime, also known as IR, provides the compute resources that Azure Data Factory uses to run data transfer activities and to dispatch other activities. The integration runtime is the heart of Azure Data Factory.

In Azure Data Factory, a pipeline is made up of activities. An activity represents an action that needs to be performed. This action can be a data transfer, which requires compute to execute, or an action that is dispatched to run on another service. The integration runtime provides the environment in which these activities execute.

Integration runtime types

Azure Data Factory offers three types of integration runtime. Choose the one that best fits your requirements and scenario. The three types are:

- Azure IR

- Self-hosted

- Azure-SSIS
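As a rough rule of thumb, the choice between the three types can be sketched as a small decision function. This is only an illustration based on the descriptions in the sections that follow; `IRType` and `choose_ir_type` are hypothetical names, not part of any Azure SDK, and real projects may weigh other factors.

```python
from enum import Enum


class IRType(Enum):
    AZURE = "Azure"
    SELF_HOSTED = "Self-hosted"
    AZURE_SSIS = "Azure-SSIS"


def choose_ir_type(runs_ssis_packages: bool, needs_on_premises_access: bool) -> IRType:
    """Pick an IR type from two yes/no requirements (illustrative only)."""
    if runs_ssis_packages:
        # SSIS packages are executed on the Azure-SSIS runtime.
        return IRType.AZURE_SSIS
    if needs_on_premises_access:
        # Only a runtime you host yourself can reach your on-premises network.
        return IRType.SELF_HOSTED
    # Otherwise the Azure-managed runtime is the simplest option.
    return IRType.AZURE


if __name__ == "__main__":
    print(choose_ir_type(runs_ssis_packages=False, needs_on_premises_access=True).value)
```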

Azure Integration Runtime

As the name suggests, the Azure integration runtime is the runtime managed by Azure itself. Azure IR represents infrastructure that is installed, configured, managed, and maintained by Azure. Because Azure manages the infrastructure, it can't be used to connect to your on-premises data sources. When you create a data factory and create a linked service, you get one IR by default, called AutoResolveIntegrationRuntime.

When you create a data factory, you specify a region for it. This region determines where the data factory's metadata is stored. It does not restrict which data sources you can access or which regions they are in.

For example, if you created the data factory in East US and your data source is in West US, that is completely fine and data transfer is still possible.

The beauty of AutoResolveIntegrationRuntime is that it automatically tries to run activities in the same region as the sink data source, or in a region close to it.

This helps activities execute with the best possible performance.

Azure IR location

When you create an Azure integration runtime, you can specify its location. Activities are then executed or dispatched in that specific region.

However, if you use the auto-resolve Azure integration runtime on a public network, Azure tries to detect the region of the sink data source and use an IR in the same region.
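The difference between a fixed location and auto-resolve can be sketched in a few lines. This is a simplified model, not the service's actual algorithm: `resolve_execution_region` is a hypothetical helper, and falling back to the data factory's own region when the sink region is unknown is an assumption made for this sketch.

```python
from typing import Optional

AUTO_RESOLVE = "AutoResolve"


def resolve_execution_region(
    ir_location: str,
    sink_region: Optional[str],
    factory_region: str,
) -> str:
    """Return the region in which an activity would run (simplified model)."""
    if ir_location != AUTO_RESOLVE:
        # A fixed IR location always wins.
        return ir_location
    if sink_region is not None:
        # Auto-resolve: prefer the region of the sink data source.
        return sink_region
    # Assumption for this sketch: fall back to the data factory's region.
    return factory_region


if __name__ == "__main__":
    print(resolve_execution_region("AutoResolve", "West US", "East US"))
    print(resolve_execution_region("North Europe", "West US", "East US"))
```

The real service may also pick a nearby region when the sink's own region is not available, which this sketch does not model.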

How to create an Azure integration runtime:

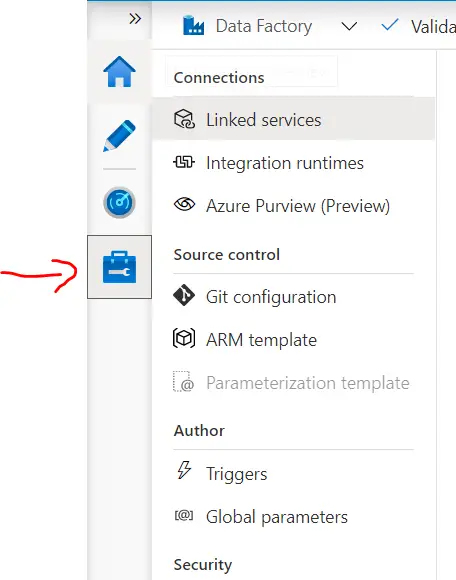

To create an integration runtime, go to the Manage tab in ADF:

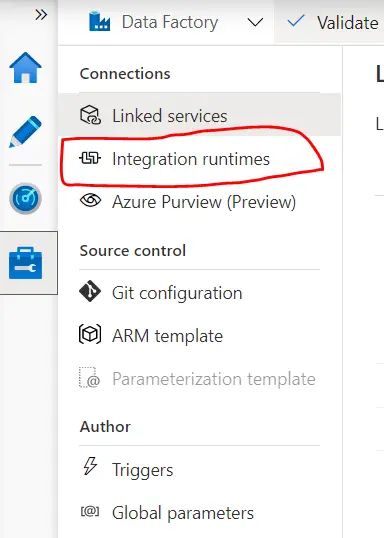

Click Integration runtimes:

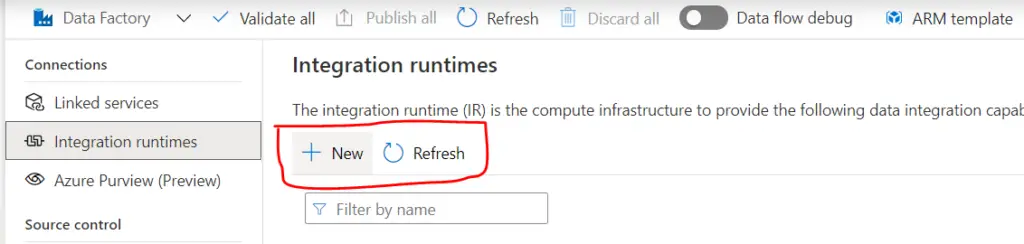

Click on New:

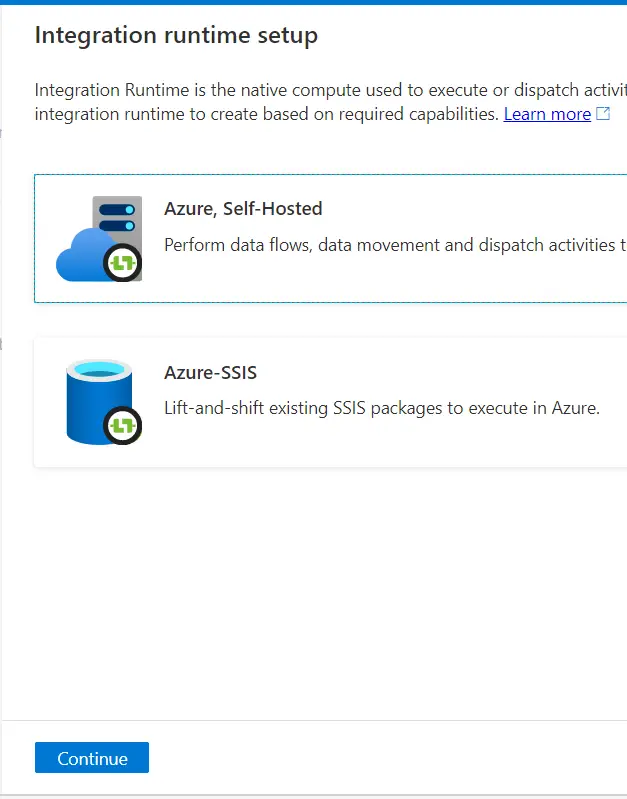

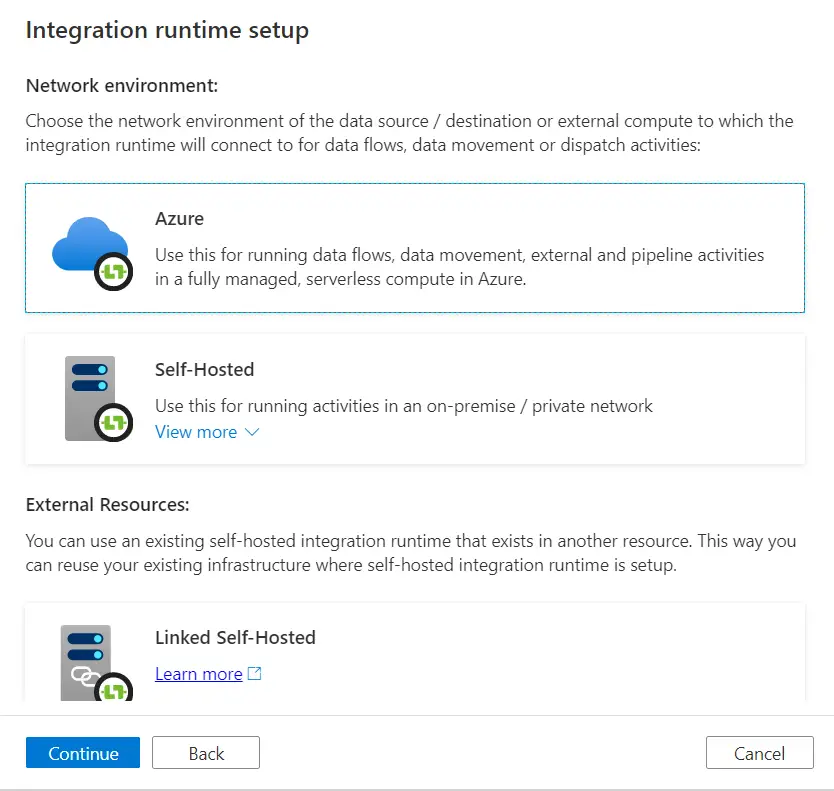

You will see the available options. Select Azure, Self-Hosted:

Then select Azure and click Continue:

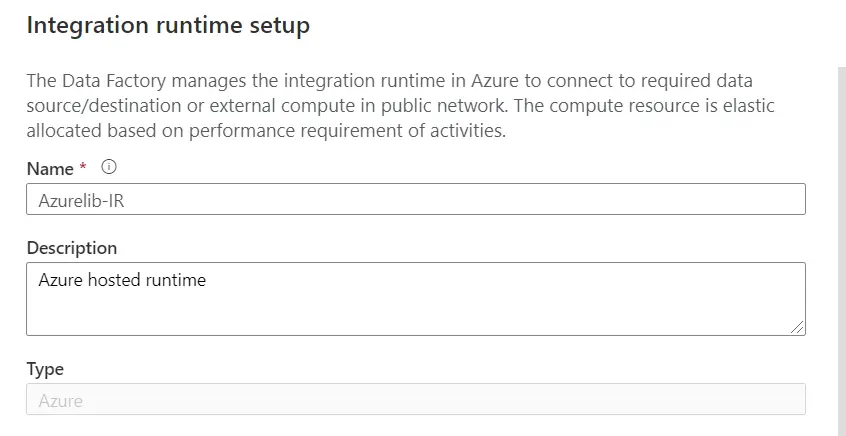

Provide a name and description for the IR. The type is set to Azure by default.

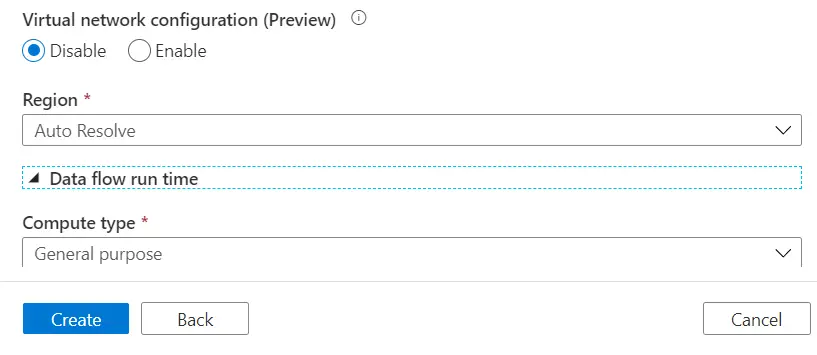

Next, you are asked to select the region. Auto Resolve automatically chooses the IR region based on the sink region. Otherwise, you can choose a specific region. For now, let's select Auto Resolve.

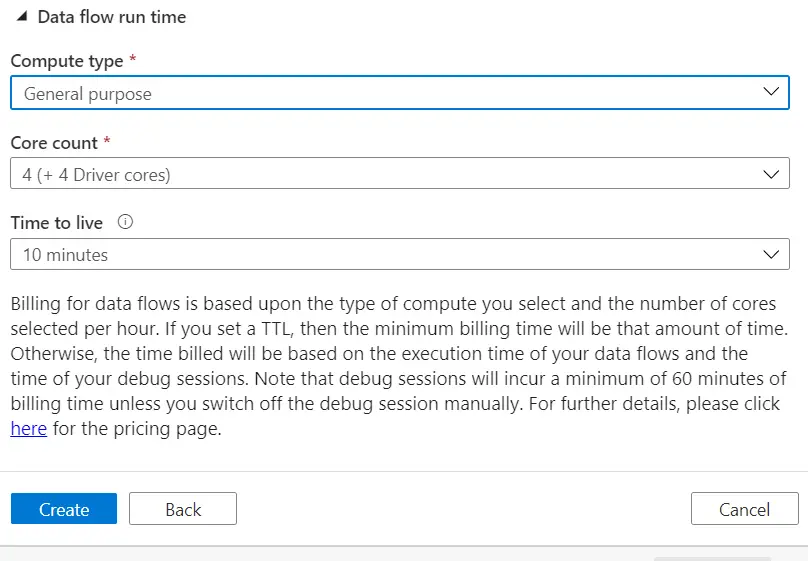

You can also configure the data flow settings as required, or keep the defaults.

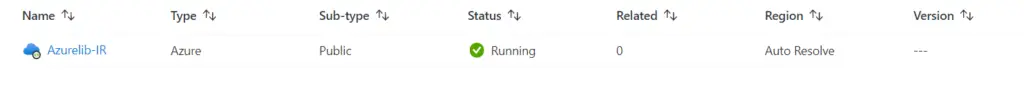

You can now see that the Azure-managed IR has been created.
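The portal steps above correspond to a small JSON definition of the IR. The sketch below builds such a definition in Python. The layout (type `Managed` with `computeProperties.location`) reflects my understanding of the ADF JSON format and should be checked against the official reference. `build_azure_ir_definition` and the name `MyAzureIR` are hypothetical, and data flow settings are left out.

```python
import json


def build_azure_ir_definition(name: str, description: str, location: str = "AutoResolve") -> dict:
    """Build a dict describing an Azure IR (assumed JSON layout, see lead-in)."""
    return {
        "name": name,
        "properties": {
            # Assumption: Azure-managed IRs use the type "Managed" in JSON.
            "type": "Managed",
            "description": description,
            "typeProperties": {
                "computeProperties": {
                    # "AutoResolve" or a specific region such as "West US".
                    "location": location,
                }
            },
        },
    }


if __name__ == "__main__":
    definition = build_azure_ir_definition("MyAzureIR", "Demo Azure IR")
    print(json.dumps(definition, indent=2))
```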

Self-Hosted Integration Runtimes

As the name suggests, a self-hosted integration runtime is an IR that you manage yourself, rather than Azure. This makes you responsible for installation, configuration, maintenance, updates, and scaling. Because you host the IR, it can also access your on-premises network.
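To route a connection through a self-hosted IR, a linked service references the IR by name. The sketch below shows one way to express that reference as a Python dict, assuming the `connectVia` / `IntegrationRuntimeReference` layout used in ADF linked service JSON. The helper `attach_integration_runtime`, the names `OnPremSqlServer` and `MySelfHostedIR`, and the placeholder connection string are all hypothetical.

```python
import copy
import json


def attach_integration_runtime(linked_service: dict, ir_name: str) -> dict:
    """Return a copy of a linked service definition that connects via the given IR."""
    result = copy.deepcopy(linked_service)
    result["properties"]["connectVia"] = {
        "referenceName": ir_name,
        "type": "IntegrationRuntimeReference",
    }
    return result


if __name__ == "__main__":
    # Hypothetical linked service for an on-premises SQL Server.
    on_prem_sql = {
        "name": "OnPremSqlServer",
        "properties": {
            "type": "SqlServer",
            "typeProperties": {"connectionString": "<your connection string>"},
        },
    }
    print(json.dumps(attach_integration_runtime(on_prem_sql, "MySelfHostedIR"), indent=2))
```

The helper copies the input rather than mutating it, so the same base definition can be reused with different runtimes.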

Azure-SSIS Integration Runtimes

As the name suggests, an Azure-SSIS integration runtime is a set of VMs running the SQL Server Integration Services (SSIS) engine, managed by Microsoft. Again, Azure is responsible for installation and maintenance. Azure Data Factory uses the Azure-SSIS integration runtime to execute SSIS packages.