The Lookup activity in an ADF pipeline is generally used to look up configuration values. It takes a source dataset, reads data from that dataset, and returns the data as the output of the activity. Later activities in the pipeline typically use this output to make decisions or to configure themselves accordingly.

In other words, the Lookup activity in an ADF pipeline only fetches data. How you use that data depends entirely on your pipeline logic. You can fetch the first row only, or you can fetch all the rows returned by your query or dataset.

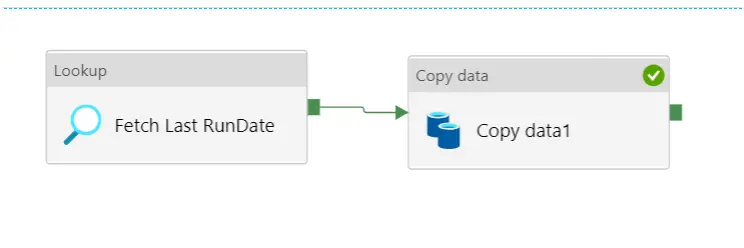

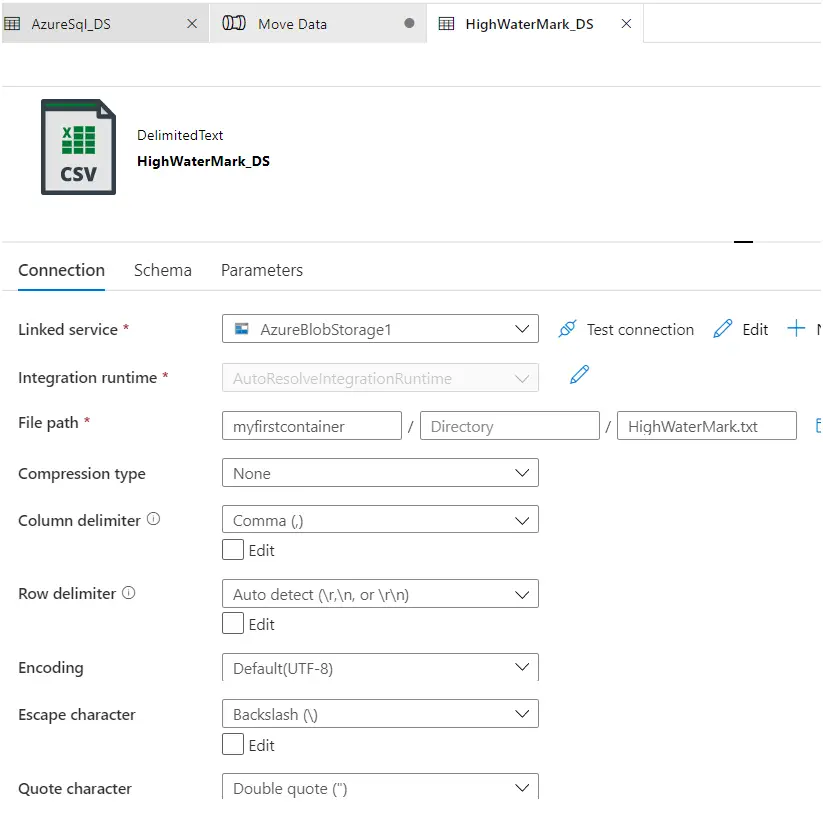

An example of the Lookup activity: let's assume we want to run a pipeline for an incremental data load. We want a Copy activity that pulls data from the source system based on the last fetched date, and we save that date in a HighWaterMark.txt file. Here the Lookup activity reads the contents of HighWaterMark.txt, and the Copy activity then fetches data based on that date.
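To make the flow concrete, here is a minimal plain-Python simulation of the pattern. It is not ADF code. The file layout (a header line followed by one date), the column name `LastFetchedDate`, and the sample rows are all assumptions made for illustration.

```python
from datetime import date


def lookup_first_row(file_text: str) -> dict:
    """Mimic a Lookup that returns only the first data row, keyed by column name."""
    header, first, *_ = file_text.strip().splitlines()
    return {"firstRow": dict(zip(header.split(","), first.split(",")))}


def copy_newer_rows(source_rows: list, watermark: date) -> list:
    """Mimic the copy step: keep only rows modified after the watermark."""
    return [row for row in source_rows if row["modified"] > watermark]


# Hypothetical contents of HighWaterMark.txt: a header line and one value.
watermark_file = "LastFetchedDate\n2024-01-15\n"

# Hypothetical source system rows.
source_rows = [
    {"id": 1, "modified": date(2024, 1, 10)},
    {"id": 2, "modified": date(2024, 1, 15)},
    {"id": 3, "modified": date(2024, 1, 20)},
]

lookup_output = lookup_first_row(watermark_file)
watermark = date.fromisoformat(lookup_output["firstRow"]["LastFetchedDate"])
new_rows = copy_newer_rows(source_rows, watermark)
print([row["id"] for row in new_rows])
```

Only the row modified after the stored date is picked up, which is the behaviour the incremental load relies on.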


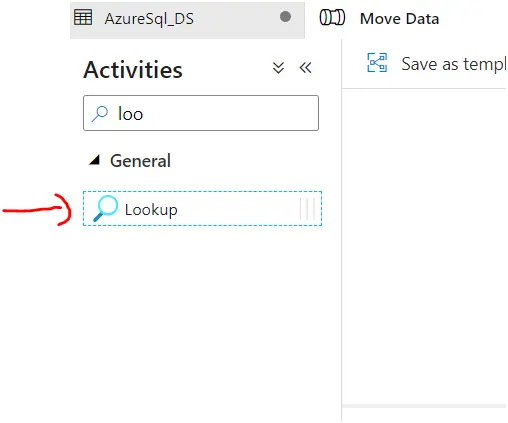

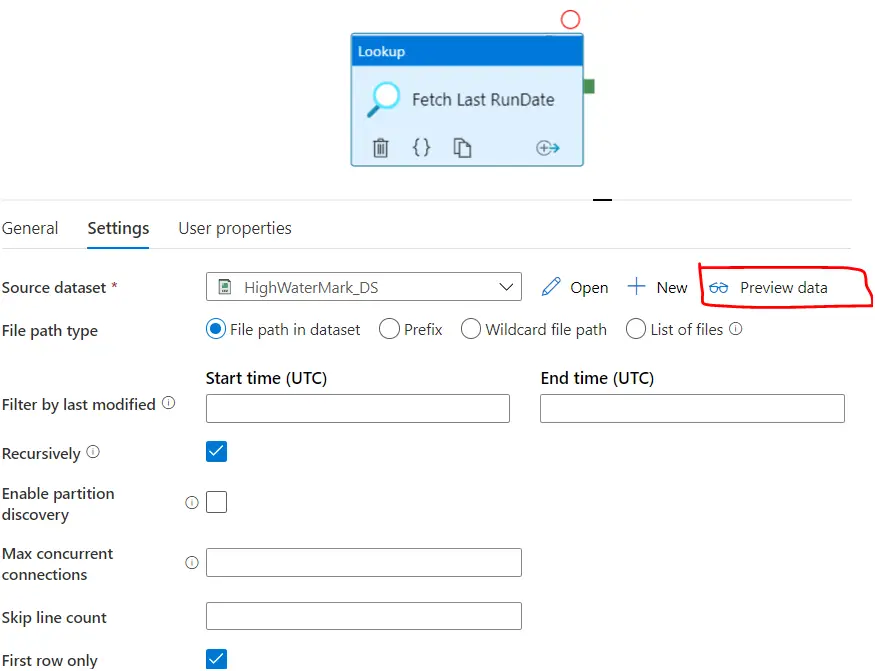

Steps to use the Lookup activity:

Drag and drop the Lookup activity from the activities tab onto the pipeline canvas.

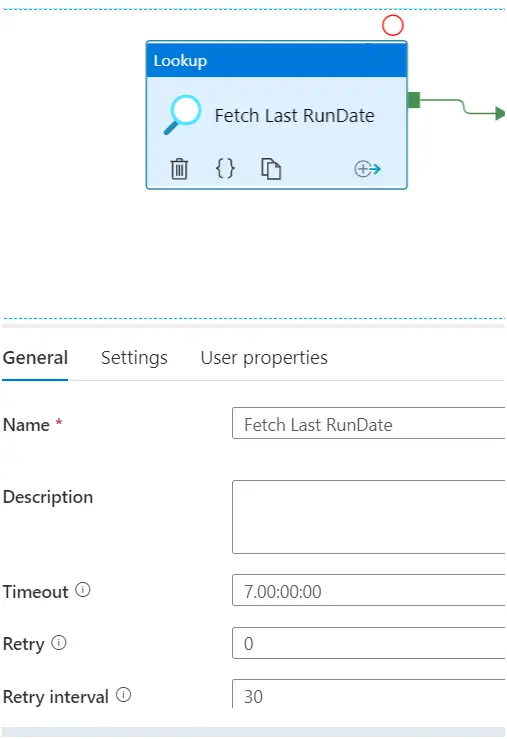

Provide the Lookup activity name and description:

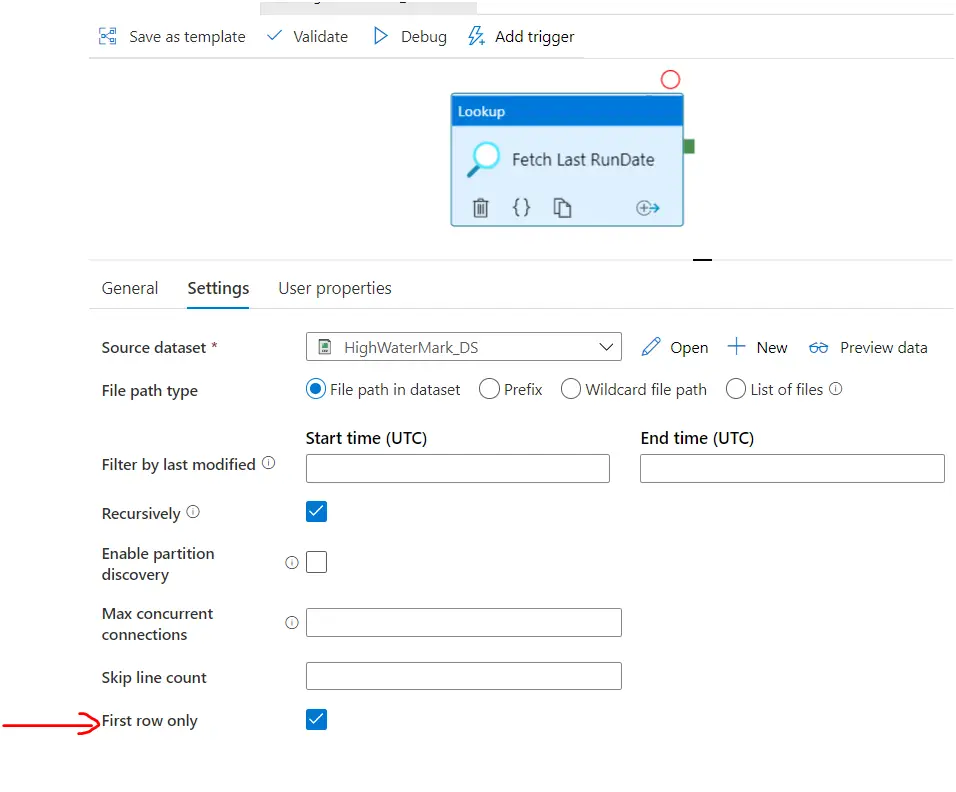

We have selected the ‘First row only’ option in the Lookup activity settings, where the source dataset is chosen.

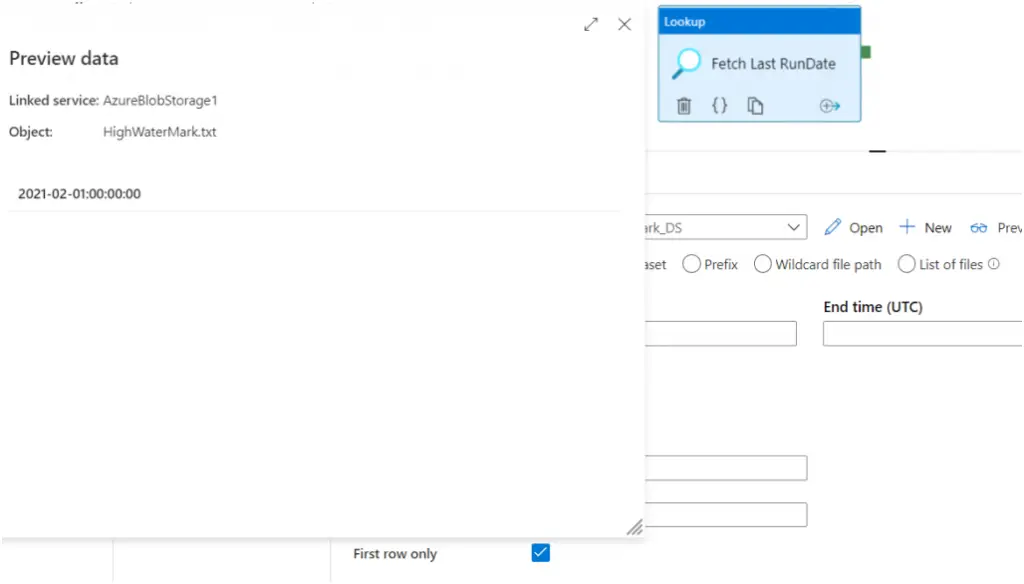
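For readers who prefer the code view, the sketch below builds a dictionary that approximates what the pipeline JSON for such a Lookup activity may look like and prints it. The activity name, the dataset reference name, and the delimited-text source type are assumptions for illustration; compare against the JSON your own factory generates rather than treating this as the exact definition.

```python
import json

# Approximate shape of a Lookup activity definition (names are hypothetical).
lookup_activity = {
    "name": "LookupHighWaterMark",  # hypothetical activity name
    "type": "Lookup",
    "typeProperties": {
        # Assumes the watermark file is read through a delimited-text dataset.
        "source": {"type": "DelimitedTextSource"},
        "dataset": {
            "referenceName": "HighWaterMarkDataset",  # hypothetical dataset name
            "type": "DatasetReference",
        },
        # Corresponds to the 'First row only' checkbox.
        "firstRowOnly": True,
    },
}

print(json.dumps(lookup_activity, indent=2))
```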

Now let's click on preview to see the data:

The preview data looks like this:

Now you can use it as input to the next activity:

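Using: @activity('activityName').output

Example in our case: @activity('activityName').output

Because ‘First row only’ is selected, the row itself is available under the firstRow property of this output.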
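The expression navigates the activity output property by property. The small Python sketch below imitates that navigation on a made-up output object; the `resolve` helper and the `LastFetchedDate` column are hypothetical and exist only for this illustration.

```python
from functools import reduce


def resolve(output: dict, path: str):
    """Walk a dotted property path through nested dictionaries."""
    return reduce(lambda node, key: node[key], path.split("."), output)


# Made-up output of a Lookup run with 'First row only' selected.
activity_output = {"firstRow": {"LastFetchedDate": "2024-01-15"}}

# Comparable to reading .output.firstRow in a pipeline expression.
print(resolve(activity_output, "firstRow"))

# Comparable to reading .output.firstRow.LastFetchedDate.
print(resolve(activity_output, "firstRow.LastFetchedDate"))
```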

Special case: sometimes you want the Lookup activity to return many rows instead of a single row, and then use that list of rows as input to the next activity. In that case, uncheck the ‘First row only’ option in the Lookup activity settings so that the activity output contains all the rows.
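With ‘First row only’ unchecked, the output carries a row count and an array of rows instead of a single firstRow object. The sketch below imitates that shape in plain Python and loops over the rows the way a following activity might. The `TableName` column, the sample rows, and the `for_each` helper are assumptions for illustration.

```python
# Made-up output of a Lookup run with 'First row only' unchecked.
lookup_output = {
    "count": 2,
    "value": [
        {"TableName": "Customers"},
        {"TableName": "Orders"},
    ],
}


def for_each(items: list, action) -> list:
    """Apply an action to every row, similar in spirit to iterating over the rows."""
    return [action(item) for item in items]


# Hypothetical per-row action: describe the copy that would run for each table.
results = for_each(lookup_output["value"], lambda item: f"copy {item['TableName']}")
print(results)
```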

Summary: That’s how you can create a Lookup activity in an ADF pipeline. The Lookup activity just provides values as its output; it’s up to you how you use them. It serves as a helper activity in the pipeline.