In this lesson 7 of our Azure Spark tutorial series I will take you through Spark SQL detailed understanding of concepts with practical examples. I will also take you through how you can leverage your SQL knowledge and power of spark spark sql to solve complex business problem statement. You can use spark SQL both in Scala and python language. In case you haven’t gone through my first Lesson 1 of Azure Databricks tutorial, I would highly recommend going to lesson 1 to understand the Azure Databricks from scratch. For creating your first Azure Databricks free trial account follow this link : Create Azure Databricks Account. Let’s dive into the tutorial now.

- Azure Databricks Lesson 1

- Azure Databricks Lesson 2

- Azure Databricks Lesson 3

- Azure Databricks Lesson 4

- Azure Databricks Lesson 5

- Azure Databricks Lesson 6

- Azure Databricks Lesson 7

Supporting Files

Spark SQL in Azure Databricks

Since Spark 2.0, spark has launched one consolidated object SparkSession, which combines SQLContext capability inside the SparkSesion object itself. Just to reiterate the point SparkSession object automatically available in the Azure Databricks Notebook with name spark. We don’t need to create it. functionality: just import the class and create an instance in your code.

You can use the spark sql using the ‘spark.sql()’.

We can create the view out of dataframes using the createOrReplaceTempView() function. For example:

df = spark.read.csv('/FileStore/tables/Order-2.csv', header='true', inferSchema='true')

df.createOrReplaceTempView("OrderView")Now once view has been created on the dataframes then you can write your logic using the spark sql as follows:

df_all = spark.sql("select * from OrderView")

df_all.show()Why you should use Spark SQL with Dataframes

Advantages of the using the Spark SQL with Dataframes are as follows:

- You can take the benefit of your SQL skills by creating the views from dataframes and then start writing your business logic in terms of SQL rather than complex spark functions.

- Whether you write in terms of spark functions or using the SQL query you can still leverage the power of spark.

- Logic written in the SQL is easy to understand for anyone who see your notebook.

- Easy to maintain code.

Compare the spark sql code and plain dataframe transformation function with example

| Using Spark Transformation | Using Spark SQL |

| df.where(“UnitsSold>3000”) .orderBy(“Country”).show() | df.createOrReplaceTempView(“Order”) spark.sql(“select * from Order where UnitsSold>3000 order by Country”).show() |

Azure Databricks Spark SQL Tables and Views

Yes you read it right. In Azure Databricks or in Spark we can create the tables and view just like we do in the normal relational database. Though Spark or Databricks is not a database and there is lot of difference in the way tables are managed in Databricks compared to relationa database. Let me explain in you detail.

- In Apache Spark, pyspark or Databricks (AWS, Azure) we can create the tables. Tables structure i.e. the metadata of the table ( table name, column details, partition, physical location where the actual data stored) are stored in a central metastore.

- By default spark uses the hive metastore which is located at /user/hive/warehouse. This contains all the information about all the tables. Its a configurable path and can be changed.

- Spark table doesn’t support the update or transactions.

- Spark table doesn’t support the foreign key constraints like normal table.

- Under the hood spark tables are files stored either in HDFS, S3, ADLS or some other supported file system.

What are the different types of the tables in spark Databricks?

There are basically two types of table which you can create in the Spark, Azure Databricks.

- Managed Table or Internal Table

- Unmanaged or External Table

Managed Table in Databricks

In the managed table you basically manage the table schema (i.e. structure) as well as the data. For example the normal tables which you create in relation database like Oracle or MySQL are managed table only. Here your table hold the data actually and as soon as you delete the table your data will also get deleted.

External Table in Databricks

In the external table you basically only maintain the table schema (i.e. structure) and data will be stored or hold by someone else. You will only point to the location where the data is saved. Once you delete the external table your data won’t get deleted because you don’t store the data inside the table. You were only pointing to it. For example you can think it as the view in the relation database like Oracle or MySQL, where once you delete the view data won’t get deleted.

Creating SQL Databases and Tables in Spark Azure Databricks

Tables always stored inside the database. By default, Azure Databricks creates tables under the default database. In case you want to store the table under your own database name then you have to create your own database. You can execute a SQL command from

your Spark application or notebook to create the database.

//Works in both SCALA or python pySpark

spark.sql("CREATE DATABASE azurelib_db")

spark.sql("USE azurelib_db")

Once the database has been created you have to executed USE database_name SQL command to change from default database to respective database. Now whatever SQL operation you do it will be corresponding to this database only

Creating a managed table

Creating a managed table is quite similar to the creating table in normal SQL. You can use the following SQL syntax to create the table.

//Works in both SCALA or python pySpark

spark.sql("CREATE TABLE employee (name STRING, emp_id INT,salary INT, joining_date STRING)")There is one another way to create a table in the Spark Databricks using the dataframe as follows:

df= spark.read.format("csv").option("inferSchema","true").load("/FileStore/tables/Order.csv")

df.write.saveAsTable("OrderTable")In this code snippet first we have loaded the data in the dataframe and then we are saving the dataframe as a table or writing dataframe as table.

Creating a External table in Databricks

Creating a external or unmanaged table in the spark Databricks is quite similar to the creating external table in HiveQL. You can use the following SQL syntax to create the external table.

spark.sql("""CREATE TABLE OrderTable(name STRING, address STRING, salary INT) USING csv OPTIONS (PATH

'/FileStore/tables/Order.csv')""")Here you can see that while creating the table you are providing the path of the file as well.

Viewing the Metadata

To view the list of the databases in the Azure Databricks we can use the following SQL operation.

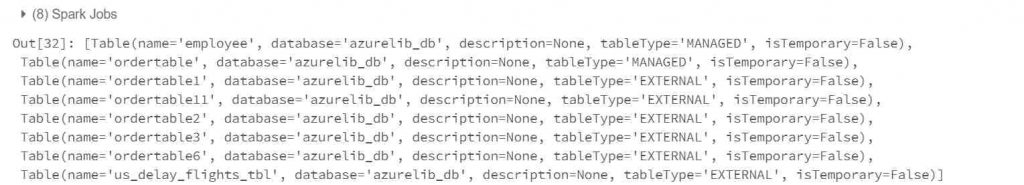

spark.catalog.listDatabases()To view the list of the tables in the Azure Databricks we can use the following SQL operation.

spark.catalog.listTables()

To view the list of the columns in a table in the Azure Databricks we can use the following SQL operation.

spark.catalog.listColumns("table_name")Apache Spark Official Page Link

Final Thoughts

In this series of the Azure Databricks Spark tutorial we have covered the Apache Spark SQL functions. We have learned how to create managed tables and unmanaged tables in databricks. I have also explained what are the advantages of using the spark sql over using the spark operations.

- Azure Databricks Lesson 1

- Azure Databricks Lesson 2

- Azure Databricks Lesson 3

- Azure Databricks Lesson 4

- Azure Databricks Lesson 5

- Azure Databricks Lesson 6

- Azure Databricks Lesson 7