Are you looking for help on how to call one pipeline from another in Azure Data Factory (ADF)? Or perhaps you want to know how to pass parameters from one pipeline to another, or how to use the Execute Pipeline activity? In this article I will take you through a step-by-step process for calling one pipeline from another and for passing parameters to the invoked pipeline with the Execute Pipeline activity. So without wasting any time, let's get started.

Create two pipelines.

Add an Execute Pipeline activity to the first pipeline.

In the Execute Pipeline activity, under Invoked pipeline, select the name of the second pipeline.

How to use the Execute Pipeline activity in Azure Data Factory

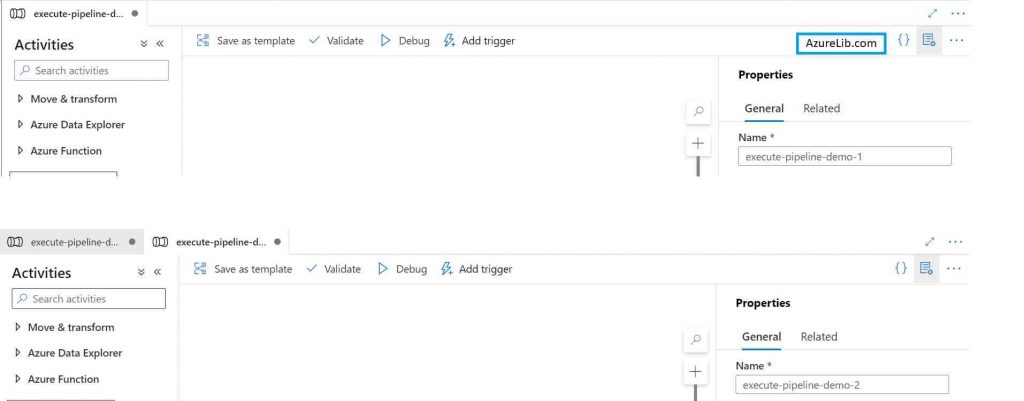

- Go to your Azure Data Factory account and create a pipeline. I am naming it execute-pipeline-demo-1, but you can choose any name you like.

- This pipeline can contain multiple activities, depending on your business needs. For this demo I am not adding any other activity; the pipeline is used only to call another pipeline.

- Create one more pipeline and name it execute-pipeline-demo-2. Again, you can choose any name you like.

- In the second pipeline, I am adding one Lookup activity that goes to the database and pulls the record count. This activity is only for demo purposes; you can add whatever activities your business logic requires.

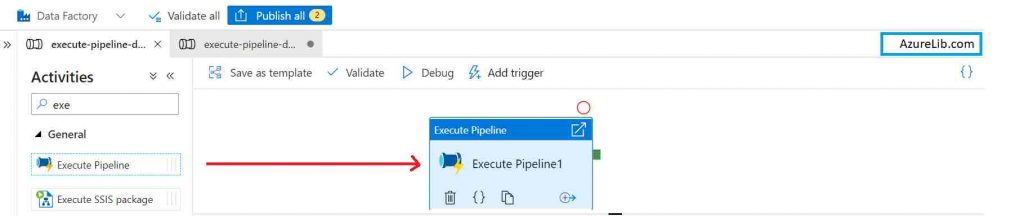

- Come back to the first pipeline (execute-pipeline-demo-1), from which we want to call the second pipeline (execute-pipeline-demo-2). Go to the activity search box and type "execute". You will see the Execute Pipeline activity in the results pane below. Drag and drop it onto the designer canvas.

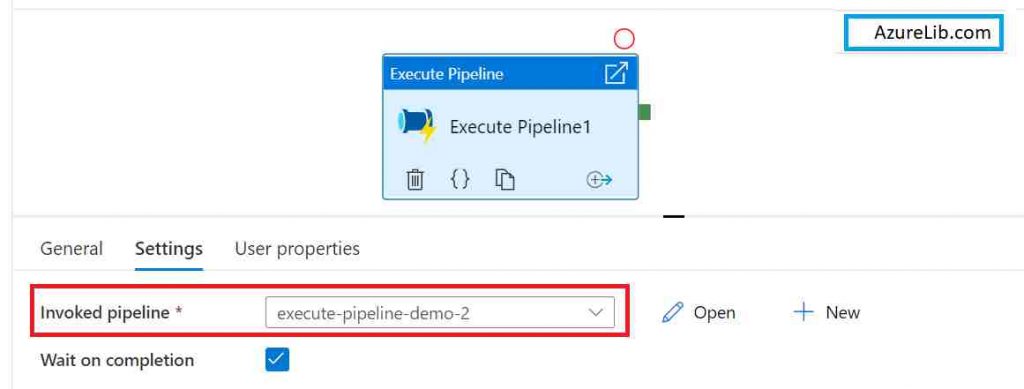

- In the Execute Pipeline activity, you will see a property named 'Invoked pipeline' under the Settings tab. Its drop-down box lists all the pipelines available in your Azure Data Factory account, and this is where you select the pipeline you want to call. I am selecting our second pipeline (execute-pipeline-demo-2). You can select any other pipeline based on your need.

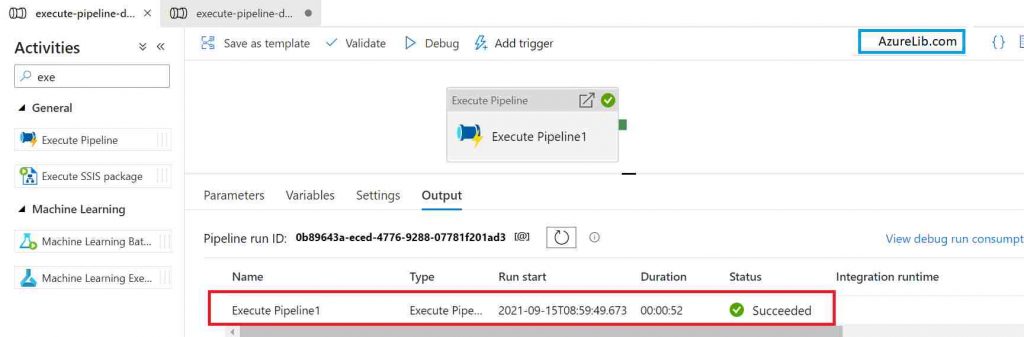

- Your pipeline is now ready to run. Click Debug to run it, and you will see that both pipelines are executed.
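Behind the designer, the steps above end up as a JSON definition of the first pipeline. The following is a minimal Python sketch that builds that JSON, assuming the documented `ExecutePipeline` activity shape; the activity name `Execute Pipeline1` and the helper function are illustrative and not something the walkthrough prescribes.

```python
import json


def execute_pipeline_activity(activity_name, invoked_pipeline, wait_on_completion=True):
    """Build the JSON fragment for one Execute Pipeline activity."""
    return {
        "name": activity_name,
        "type": "ExecutePipeline",
        "typeProperties": {
            # Corresponds to the 'Invoked pipeline' drop-down in the Settings tab.
            "pipeline": {
                "referenceName": invoked_pipeline,
                "type": "PipelineReference",
            },
            "waitOnCompletion": wait_on_completion,
        },
    }


# Parent pipeline from the walkthrough; the activity name is hypothetical.
parent_pipeline = {
    "name": "execute-pipeline-demo-1",
    "properties": {
        "activities": [
            execute_pipeline_activity("Execute Pipeline1", "execute-pipeline-demo-2")
        ]
    },
}

print(json.dumps(parent_pipeline, indent=2))
```

You can compare the printed JSON with what the code view of your own pipeline shows in the designer; the exact set of extra properties there may differ.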

How to pass parameters from one pipeline to another pipeline, with an example

- Go to your Azure Data Factory account and create the first pipeline. Give it a name like execute-pipeline-parameter-demo-1. This name is not mandatory; you can choose one that fits your requirement.

- Create one more pipeline and name it execute-pipeline-parameter-demo-2. Add activities as per your business need; I am adding a Lookup activity for our demo.

- In this second pipeline, create two pipeline parameters named 'schemaName' and 'tableName', and use them inside the Lookup activity.

- Now come back to the first pipeline (execute-pipeline-parameter-demo-1). Go to the activity search box and type "execute pipeline"; the Execute Pipeline activity will appear in the results. Drag and drop it onto the pipeline designer canvas.

- Go to the Settings tab of the activity, where you will see the field named Invoked pipeline. Select the pipeline you want to call.

- The moment you select the second pipeline, you will be asked to set its two parameters, 'schemaName' and 'tableName'.

- Provide the parameter values here. They will be passed to the second pipeline when it is executed.

That's how you can pass parameters from one pipeline to another pipeline.

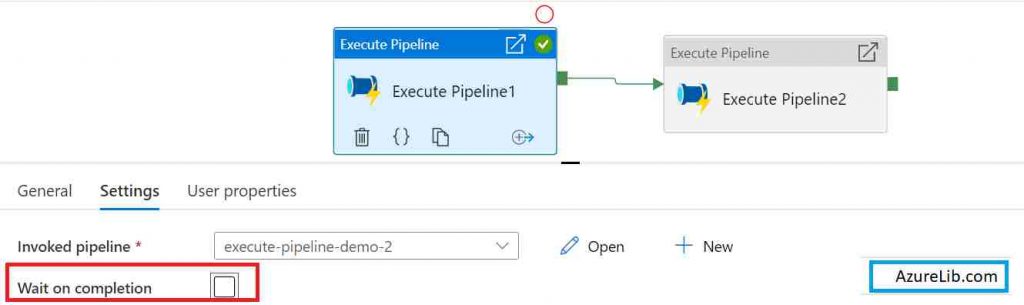
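The parameter flow above can be sketched as JSON built in Python. This is an illustration under a few assumptions: the values `dbo` and `Customer`, the activity name, the count query, and the helper function are all hypothetical. Only the parameter names 'schemaName' and 'tableName' and the pipeline names come from the steps above.

```python
import json

# Parameters declared on the child pipeline (execute-pipeline-parameter-demo-2).
child_parameters = {
    "schemaName": {"type": "string"},
    "tableName": {"type": "string"},
}

# One way the child's Lookup query could read those parameters (assumed query).
lookup_query = (
    "@concat('SELECT COUNT(*) AS recordCount FROM ', "
    "pipeline().parameters.schemaName, '.', "
    "pipeline().parameters.tableName)"
)


def execute_with_parameters(activity_name, invoked_pipeline, declared, values):
    """Build an Execute Pipeline activity that passes parameter values."""
    # Reject names the child pipeline does not declare.
    unknown = sorted(set(values) - set(declared))
    if unknown:
        raise ValueError(f"Not declared on {invoked_pipeline}: {unknown}")
    return {
        "name": activity_name,
        "type": "ExecutePipeline",
        "typeProperties": {
            "pipeline": {
                "referenceName": invoked_pipeline,
                "type": "PipelineReference",
            },
            "parameters": dict(values),
            "waitOnCompletion": True,
        },
    }


# 'dbo' and 'Customer' are placeholder values, not taken from the article.
activity = execute_with_parameters(
    "Execute Pipeline1",
    "execute-pipeline-parameter-demo-2",
    child_parameters,
    {"schemaName": "dbo", "tableName": "Customer"},
)
print(json.dumps(activity, indent=2))
```

The check for undeclared names is only a convenience in this sketch; in the designer, the parameter list appears automatically once the invoked pipeline is selected.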

What is Wait on completion in the Execute Pipeline activity?

The Execute Pipeline activity runs one pipeline from another. Wait on completion is a setting on this activity that controls whether the invoked pipeline runs sequentially or in parallel with the activities that follow it.

For example, suppose you have several Execute Pipeline activities in a pipeline and you want the activities that follow one of them to start only after its invoked pipeline has completed, i.e. you want sequential execution. You can achieve this by setting the Wait on completion property to true.

This ensures that the next activity after the Execute Pipeline activity does not start until the invoked pipeline has finished.

If you do not need sequential execution, uncheck the Wait on completion checkbox. The invoked pipeline will then run in parallel with the next activity.

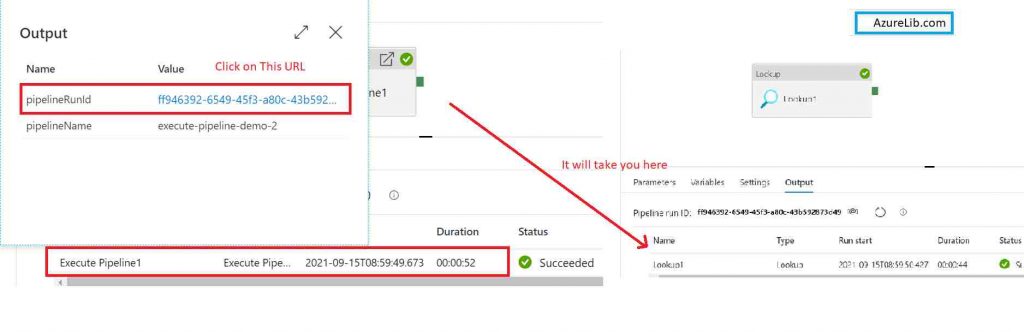
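To make the effect of this setting concrete, here is a small Python sketch that builds two chained Execute Pipeline activities. The pipeline names `load-master-data` and `load-entity-data`, the activity names, and the helper are hypothetical. The sketch assumes the checkbox maps to the `waitOnCompletion` property and that the arrow between activities is stored as a `dependsOn` entry.

```python
import json


def execute_pipeline(name, invoked_pipeline, wait_on_completion, depends_on=None):
    """Build an Execute Pipeline activity, optionally chained after another one."""
    activity = {
        "name": name,
        "type": "ExecutePipeline",
        "dependsOn": [],
        "typeProperties": {
            "pipeline": {
                "referenceName": invoked_pipeline,
                "type": "PipelineReference",
            },
            # True: this activity finishes only when the invoked pipeline finishes.
            # False: this activity finishes once the invoked pipeline has been started.
            "waitOnCompletion": wait_on_completion,
        },
    }
    if depends_on:
        activity["dependsOn"].append(
            {"activity": depends_on, "dependencyConditions": ["Succeeded"]}
        )
    return activity


# Hypothetical names: the second activity starts after the first one succeeds.
first = execute_pipeline("Run master load", "load-master-data", True)
second = execute_pipeline(
    "Run entity load", "load-entity-data", True, depends_on="Run master load"
)

print(json.dumps([first, second], indent=2))
```

With `wait_on_completion=True` on the first activity, the second starts only after the master load pipeline has finished. Passing `False` instead would let the second activity start while the master load is still running.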

How to check the output of the Execute Pipeline activity in Azure Data Factory

In general, you go to the Output tab of the main pipeline to see the output of all its activities. If the pipeline contains an Execute Pipeline activity, you will probably want to see the output of the child (invoked) pipeline as well.

To check the output of the pipeline invoked by the Execute Pipeline activity, click on the output of the Execute Pipeline activity. There you will see a URL. Click on this URL and it will take you to the Output tab of the invoked pipeline, where you can see the output of all its activities.
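Besides viewing the output in the UI, the parent pipeline can also read it in an expression. The sketch below builds a Set Variable activity that stores the child's run ID. It assumes the Execute Pipeline output exposes a `pipelineRunId` property (worth confirming in your own Output tab) and that the parent has a string variable named `childRunId`; both names, like the activity names, are illustrative.

```python
import json


def activity_output(activity_name, property_path):
    """Build an ADF expression that reads a property from an activity's output."""
    return f"@activity('{activity_name}').output.{property_path}"


# Assumes a pipeline variable 'childRunId' exists on the parent pipeline.
capture_run_id = {
    "name": "Set childRunId",
    "type": "SetVariable",
    "dependsOn": [
        {"activity": "Execute Pipeline1", "dependencyConditions": ["Succeeded"]}
    ],
    "typeProperties": {
        "variableName": "childRunId",
        "value": {
            # 'pipelineRunId' is the assumed output property name.
            "value": activity_output("Execute Pipeline1", "pipelineRunId"),
            "type": "Expression",
        },
    },
}

print(json.dumps(capture_run_id, indent=2))
```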

What are the business use cases or scenarios where you need to call a pipeline from another pipeline?

- You want to chain two different pipeline flows together. For example, one pipeline pulls the master data and another pulls the entity data, and you want to run them in sequence.

- You want to split an existing pipeline into two or more pipelines, because keeping all the logic in one pipeline makes it too big to handle and maintain.

- You want to nest ForEach activities, but Azure Data Factory does not allow a ForEach activity inside another ForEach activity. To solve this, split the pipeline into two, each containing one ForEach activity, and call the second pipeline from inside the first pipeline's ForEach using the Execute Pipeline activity.
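The nested ForEach workaround from the last scenario can be sketched as two pipeline definitions. Everything named here is hypothetical: the pipeline names, the `schemaList` and `tableList` parameters, and the Wait activity standing in for real work inside the inner loop. The sketch only shows the shape: the outer ForEach contains an Execute Pipeline activity, and the inner pipeline holds the second ForEach.

```python
import json

# Inner pipeline: one ForEach over a list supplied as a parameter.
inner_pipeline = {
    "name": "inner-foreach-demo",
    "properties": {
        "parameters": {
            "schemaName": {"type": "string"},
            "tableList": {"type": "array"},
        },
        "activities": [
            {
                "name": "ForEach table",
                "type": "ForEach",
                "typeProperties": {
                    "items": {
                        "value": "@pipeline().parameters.tableList",
                        "type": "Expression",
                    },
                    # Placeholder activity standing in for the real per-table work.
                    "activities": [
                        {
                            "name": "Placeholder work",
                            "type": "Wait",
                            "typeProperties": {"waitTimeInSeconds": 1},
                        }
                    ],
                },
            }
        ],
    },
}

# Outer pipeline: its ForEach calls the inner pipeline once per item.
outer_pipeline = {
    "name": "outer-foreach-demo",
    "properties": {
        "parameters": {
            "schemaList": {"type": "array"},
            "tableList": {"type": "array"},
        },
        "activities": [
            {
                "name": "ForEach schema",
                "type": "ForEach",
                "typeProperties": {
                    "items": {
                        "value": "@pipeline().parameters.schemaList",
                        "type": "Expression",
                    },
                    "activities": [
                        {
                            "name": "Run inner loop",
                            "type": "ExecutePipeline",
                            "typeProperties": {
                                "pipeline": {
                                    "referenceName": "inner-foreach-demo",
                                    "type": "PipelineReference",
                                },
                                "parameters": {
                                    "schemaName": {"value": "@item()", "type": "Expression"},
                                    "tableList": {
                                        "value": "@pipeline().parameters.tableList",
                                        "type": "Expression",
                                    },
                                },
                                "waitOnCompletion": True,
                            },
                        }
                    ],
                },
            }
        ],
    },
}


def has_nested_foreach(pipeline):
    """Return True if any ForEach directly contains another ForEach."""
    for activity in pipeline["properties"]["activities"]:
        if activity["type"] == "ForEach":
            inner = activity["typeProperties"]["activities"]
            if any(a["type"] == "ForEach" for a in inner):
                return True
    return False


print(json.dumps(outer_pipeline, indent=2))
print(has_nested_foreach(outer_pipeline), has_nested_foreach(inner_pipeline))
```

Neither pipeline places a ForEach directly inside another one, yet running the outer pipeline still executes the inner loop once for each outer item.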

For more details, see the official Microsoft documentation for the Execute Pipeline activity.

Final Thoughts

With this we have reached the end of the article. We have seen how to call one pipeline from another and how to pass parameters from the first pipeline to the second. I have also explained the Execute Pipeline activity step by step using a real example. I hope you have enjoyed this post and learned a couple of new things today.

Please share your comments and suggestions in the comment section below and I will try to answer all your queries as time permits.

Thank you for reading. See you in the next article.