Welcome to my Azure Data Factory tutorial series. In this ADF tutorial series I will take you step by step through learning and mastering Azure Data Factory. I will explain every concept with practical examples, which will help you get ready to work as an Azure data engineer. In this Azure Data Factory tutorial series for beginners, I will teach you how to create an ADF pipeline, how to schedule it, and how to manage, deploy, and monitor it. I will cover every detail you need to build expertise in Azure Data Factory. Let’s start learning without wasting any time.

Prerequisites for Azure Data Factory Tutorial:

There is no strict prerequisite for this tutorial guide. However, I have a few recommendations:

- Basic computer knowledge.

- A basic understanding of SQL would be an added advantage.

Lessons in this series:

- Lesson 1: Azure Data Factory Concepts

- Lesson 2: Azure Data Factory Studio Overview

- Lesson 3: Azure Data Factory Create Your First Pipeline

- Lesson 4: ADF Linked Service in Detail

- Lesson 5: Azure Data Factory – Copy Pipeline

- Lesson 6: Add Dynamic Content – Expression Builder

Resource Requirements for Azure Data Factory

- An active Azure subscription. (If you don’t have one, don’t worry. I provide a step-by-step guide to creating your first free Azure account. Link is attached here: Azure Free Account Step by Step guide)

We will start with an overview of Azure Data Factory and then go deeper into each topic, with hands-on examples:

What is Azure Data Factory

Azure Data Factory is the data orchestration service provided by the Microsoft Azure cloud. ADF is mainly used for the following use cases:

- Data migration from one data source to another

- On-premises to cloud data migration

- ETL (extract, transform, load) workloads

- Automating data flows

What are the elements of Azure Data Factory

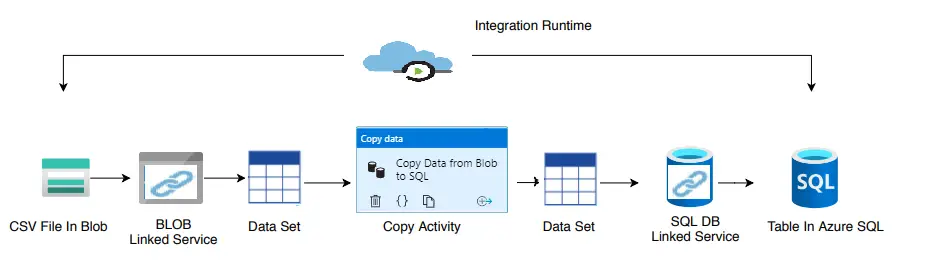

Data Source: The source system that contains the data to be used or operated on. It could be text, binary, JSON, or CSV files, audio, video, or image files, or a proper database.

Linked Service: You can think of it as the key (like a key that opens a lock) that authenticates access to the data source. For example, when the data source is a database, the linked service would be created using the database user name and password. Whenever you need to connect to a data source, you do it through a linked service.

Dataset: A dataset is the representation of the data contained in the data source. For example, a Blob binary dataset represents a binary file in Azure Blob Storage. To create a dataset, a linked service needs to be created first. The dataset reads data from, and writes data to, the data source through the linked service.

Activity: An activity represents an operation that needs to be performed on the data. Many activities are available in Azure Data Factory. The most common is the Copy activity, which copies data from a source to a destination.

Pipeline: A logical grouping of activities that together perform a task. It lets you arrange the tasks into the logical or sequential flow needed to achieve a goal.

Integration Runtime: The powerhouse of the Azure Data Factory pipeline. It provides the compute resources to perform the operations defined by the activities.
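To make the relationship between these elements concrete, here is a minimal sketch in Python that builds simplified versions of the JSON definitions ADF uses behind the scenes. All names (`DemoBlobStorageLinkedService`, `DemoCopyPipeline`, the containers, and so on) are hypothetical, the connection string is a placeholder, and real definitions usually carry more properties than shown here. The point is only to show how a pipeline's activity refers to datasets, and how each dataset refers to a linked service.

```python
import json

# Hypothetical names, used only for illustration.
LINKED_SERVICE_NAME = "DemoBlobStorageLinkedService"
SOURCE_DATASET_NAME = "DemoSourceBinaryDataset"
SINK_DATASET_NAME = "DemoSinkBinaryDataset"

# Linked service: holds the connection details ("the key") for the data source.
linked_service = {
    "name": LINKED_SERVICE_NAME,
    "properties": {
        "type": "AzureBlobStorage",
        "typeProperties": {
            # Placeholder only; never hard-code a real secret.
            "connectionString": "<your-storage-connection-string>"
        },
    },
}


def binary_dataset(name: str, container: str) -> dict:
    """Build a simplified Binary dataset that reaches its data via the linked service."""
    return {
        "name": name,
        "properties": {
            "type": "Binary",
            "linkedServiceName": {
                "referenceName": LINKED_SERVICE_NAME,
                "type": "LinkedServiceReference",
            },
            "typeProperties": {
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                }
            },
        },
    }


source_dataset = binary_dataset(SOURCE_DATASET_NAME, "input")
sink_dataset = binary_dataset(SINK_DATASET_NAME, "output")

# Pipeline: a logical grouping of activities; here a single Copy activity.
pipeline = {
    "name": "DemoCopyPipeline",
    "properties": {
        "activities": [
            {
                "name": "CopyBinaryFiles",
                "type": "Copy",
                "inputs": [
                    {"referenceName": SOURCE_DATASET_NAME, "type": "DatasetReference"}
                ],
                "outputs": [
                    {"referenceName": SINK_DATASET_NAME, "type": "DatasetReference"}
                ],
                "typeProperties": {
                    "source": {"type": "BinarySource"},
                    "sink": {"type": "BinarySink"},
                },
            }
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

Reading it bottom-up mirrors the order in which you create things in ADF: the linked service first, then the datasets that use it, and finally the pipeline whose activity copies from one dataset to the other.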

Why you should learn Azure Data Factory

Azure Data Factory is the service Microsoft Azure provides for ETL work, data migration, and orchestrating data workflows. So if you are planning to build your profile as an Azure data engineer, this is one of the mandatory services you must know. Many organizations that are building a data lake solution or moving to the cloud use ADF in one way or another.

Real-World Use Case Scenarios

- Multiple applications store their data in on-premises databases or data warehouse solutions. The organization has decided to move to the cloud, and as part of that you have to move all the data and workloads to a cloud data warehouse solution.

- You have data available as CSV files in Azure Blob Storage and have to move the data in all these files to an Azure SQL database, where it will later be used by a downstream application.

- You may want to create a workflow between the Azure cloud and another cloud solution such as AWS or Snowflake.

- You want to create a workflow that involves different services such as Databricks and Azure Functions, or perhaps Hive, MapReduce, or a Python or PowerShell script.
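As a rough illustration of the second and fourth scenarios combined, the sketch below describes a pipeline with two activities: a Copy activity that loads CSV data into Azure SQL, followed by a Databricks notebook that runs only if the copy succeeds. The activity, dataset, linked service, and notebook names are all hypothetical, and the definitions are simplified. The `execution_order` helper is not part of ADF; it is a small function written here just to show how the `dependsOn` entries determine the order of the workflow.

```python
# Simplified activity definitions; all names are hypothetical.
activities = [
    {
        "name": "RunCleanupNotebook",
        "type": "DatabricksNotebook",
        "linkedServiceName": {
            "referenceName": "DemoDatabricksLinkedService",
            "type": "LinkedServiceReference",
        },
        "typeProperties": {"notebookPath": "/Shared/demo-cleanup"},
        # Run only after the copy activity has succeeded.
        "dependsOn": [
            {"activity": "CopyCsvToSql", "dependencyConditions": ["Succeeded"]}
        ],
    },
    {
        "name": "CopyCsvToSql",
        "type": "Copy",
        "inputs": [{"referenceName": "DemoCsvDataset", "type": "DatasetReference"}],
        "outputs": [{"referenceName": "DemoSqlTableDataset", "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": "DelimitedTextSource"},
            "sink": {"type": "AzureSqlSink"},
        },
    },
]


def execution_order(activity_list: list) -> list:
    """Return activity names in an order that respects their dependsOn entries."""
    pending = {
        a["name"]: {d["activity"] for d in a.get("dependsOn", [])}
        for a in activity_list
    }
    order = []
    while pending:
        # An activity is ready once everything it depends on has been scheduled.
        ready = sorted(name for name, deps in pending.items() if deps <= set(order))
        if not ready:
            raise ValueError("cyclic or unresolved dependency between activities")
        for name in ready:
            order.append(name)
            del pending[name]
    return order


print(execution_order(activities))
```

Even though the notebook activity is listed first, the dependency places it after the copy, which is the kind of sequencing a pipeline gives you.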

Let’s start learning Azure Data Factory by practicing it. Our first step is to create the Azure Data Factory account. I will take you step by step through creating your first Azure Data Factory account.

How to create an Azure Data Factory Account

To create the Azure Data Factory account, log in to the Azure portal. In the search box, enter data factory, and you will see the data factory in the results pane. Click on it, and then click the ‘+’ icon or the ‘New’ link to create your first Azure Data Factory account.

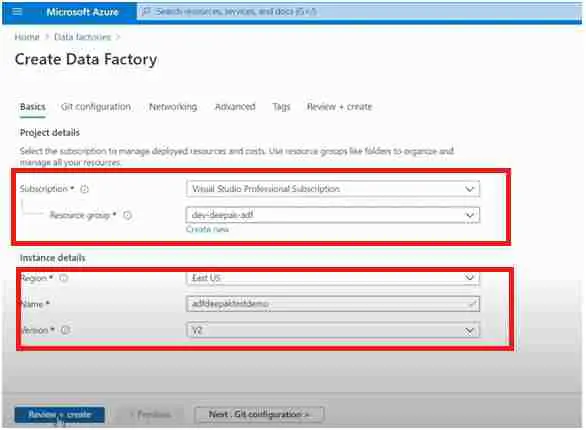

Click on that and you will be welcomed with the following screen.

It asks for the basic details needed to create an Azure Data Factory account, starting with the subscription that the ADF cost will be billed to and the resource group that will contain the account. You have to provide a name for your account, which must be unique, and choose a region. The region determines where the metadata about the data factory is stored, not the data.

You are also asked to choose the ADF version. V2 is selected by default, and you should keep it, as it is the latest version and the older ADF V1 is deprecated.

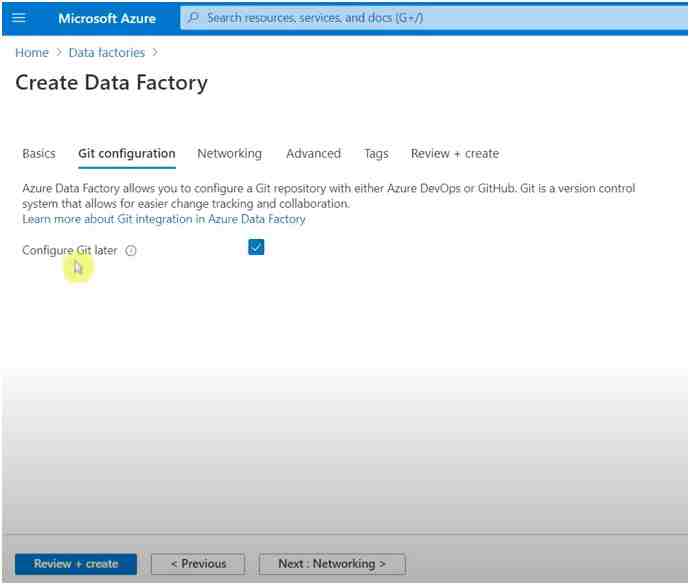

The next screen asks you to set up the Git configuration. Git configuration is used for source control, that is, for maintaining and keeping track of the changes made in ADF. For now you can skip it. We will revisit it after a couple of lessons, once you are used to ADF.

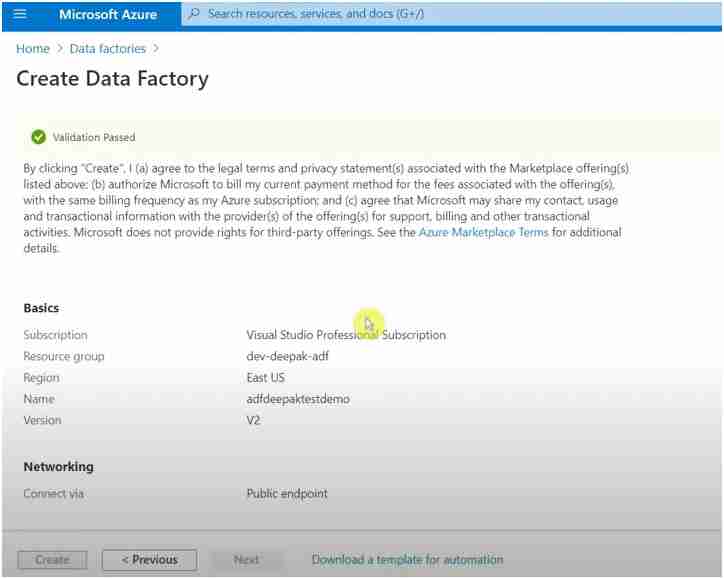

Next is the Networking tab. You don’t have to make any changes there; you can keep the defaults.

After that it asks you to add tags. This is also optional: if you want to add tag values you can, otherwise skip it.

Finally you reach the last screen, where you are asked to review all the configuration you have set. Then click Create.

Creation takes a little time, maybe a few seconds. Once you get the success notification, you are good to go.

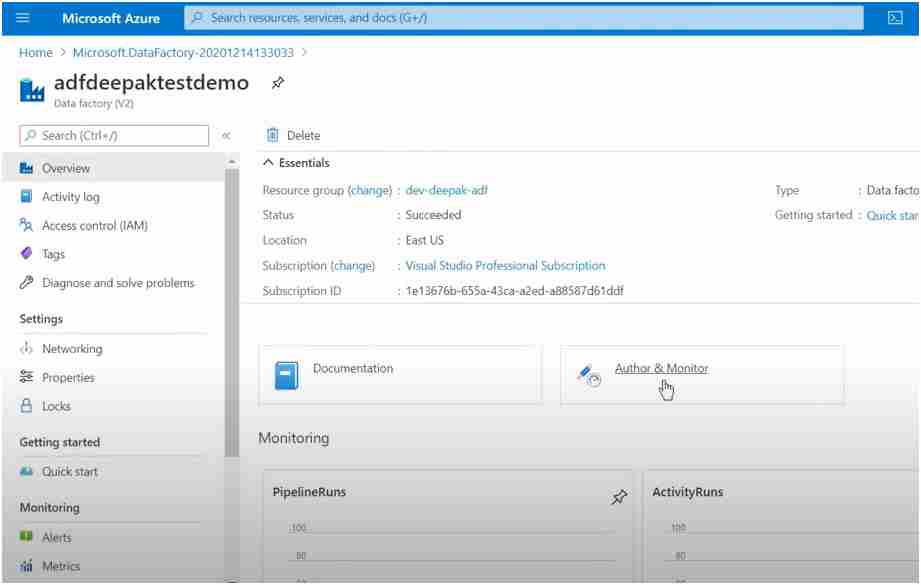

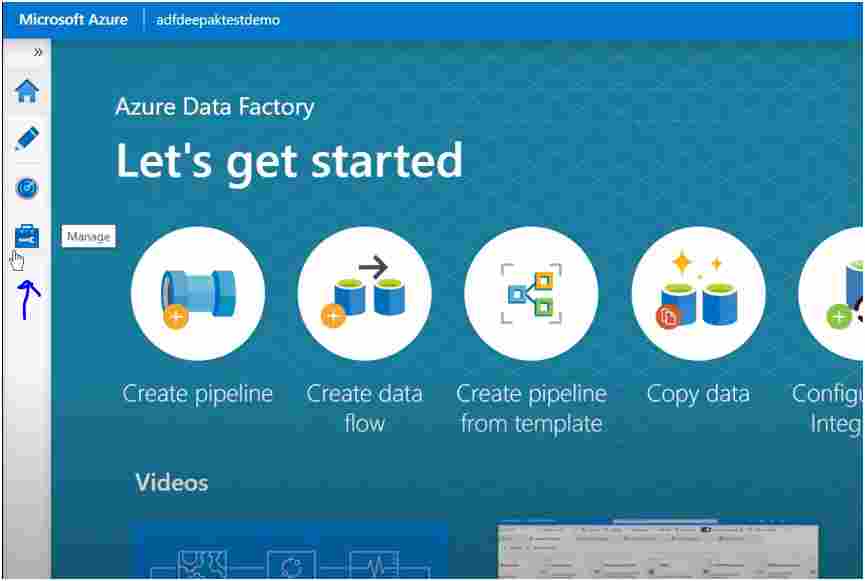

Go to the ADF account you just created and you will see the screen shown here. At the bottom of the right-hand pane you will see the Author tab. Click on it and it will take you to Azure Data Factory Studio.

Now you are ready to rock the ADF world. You have an ADF account created, and we can start developing your first Azure Data Factory pipeline.

In the next tutorial we will see how to create your first pipeline along with other components such as datasets and linked services.

Overview of Azure data factory : Azure Data Factory

How to create Azure data factory account

How to create Linked service in Azure data factory

What is dataset in azure data factory

Lookup Activity in ADF pipeline

Create Linked Service to connect Azure SQL Database in Azure Data factory

Create dynamic dataset in ADF for Azure Sql Db

Copy activity Azure data factory with example

Microsoft Official Documentation for Azure data factory link

Take your time going through each topic, and don’t forget to do the hands-on practice, as that is the most important part. Meanwhile, I am working on putting up a couple more topics.

Final Thoughts

Azure Data Factory is one of the most needed skills for data engineers working in the Azure world. I have worked to bring together all my knowledge and material to give you the precise knowledge you need to excel quickly and efficiently.

Going through all of these Azure Data Factory tutorial topics will give you a complete overview of Azure Data Factory and how you can use it.
