Are you trying to understand what the ForEach activity in Azure Data Factory is, or looking for use cases and scenarios where you can use it? There are situations where the ForEach activity is the only way to meet the business requirements. In this tutorial, we will go step by step through how to use the ForEach activity with Blob Storage to iterate over all the files in a folder. I will also demonstrate how to use the ForEach activity along with the Get Metadata activity, and we will go through examples that combine the ForEach activity with the Lookup and Copy activities. Let’s get into each of these in detail, step by step, with the implementation.

What is the foreach activity in the Azure Data Factory?

The ForEach activity is the Azure Data Factory activity used for iterating over a collection of items. For example, if you have multiple files that you want to process in the same manner, you can use the ForEach activity. Similarly, if you are pulling multiple tables from a database, a ForEach loop lets you reuse the logic that pulls the data from one table across the whole list of tables. In general, whenever you want to execute the same logic (a sequence of activities in ADF) repeatedly over a list of items, ForEach is the recommended way to do it.

The ForEach activity is to ADF roughly what a for loop or while loop is to programming languages such as C, C++, Python, Java, and Scala.

How to access files in a folder using the foreach activity in Azure Data Factory with Example

Use Case Scenario: Assume that there are multiple files in a folder, and you want to copy these files into another folder under new names.

Logical Plan:

- Create a pipeline with the Get Metadata activity. This activity will be used to pull the list of files from the folder.

- Add a ForEach activity after the Get Metadata activity, so that the output of the Get Metadata activity is passed to the ForEach activity as input.

- Configure the ForEach activity to iterate over the array of files returned by the Get Metadata activity.

- Inside the ForEach activity, use the Copy activity to copy the current file into the destination folder.
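To make the plan concrete before building it in ADF, here is a minimal local sketch of the same logic in plain Python. It is only an analogy: it works on local folders rather than Blob Storage, and the function name `copy_with_new_names` and the `copy_` prefix are made up for illustration.

```python
import shutil
import tempfile
from pathlib import Path


def copy_with_new_names(source: Path, destination: Path, prefix: str = "copy_") -> list:
    """Mimic the plan: list the folder, loop over the entries, copy each file."""
    # Step 1 (Get Metadata): collect the files found in the source folder.
    child_items = sorted(p for p in source.iterdir() if p.is_file())
    copied = []
    # Steps 2 and 3 (ForEach): iterate over the array of files.
    for item in child_items:
        # Step 4 (Copy): write the current file to the destination under a new name.
        new_name = prefix + item.name
        shutil.copy(item, destination / new_name)
        copied.append(new_name)
    return copied


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as src, tempfile.TemporaryDirectory() as dst:
        for name in ("a.txt", "b.txt"):
            (Path(src) / name).write_text("sample")
        print(copy_with_new_names(Path(src), Path(dst)))
```

Each step of the plan maps to one part of the function, which is the same shape the pipeline will have.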

Implementation:

Now let’s see the step-by-step process to implement our logic to copy the list of files from one Blob Storage folder to another.

- Create a pipeline in the data factory. Give it any name you like; I am using for-each-activity-example.

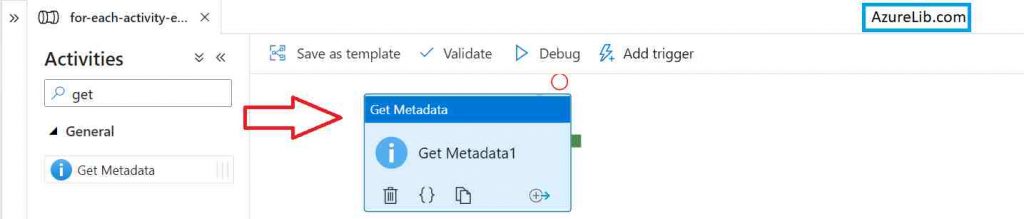

- In the activity search box, type getmetadata and you will see the Get Metadata activity. Drag and drop the activity into your pipeline.

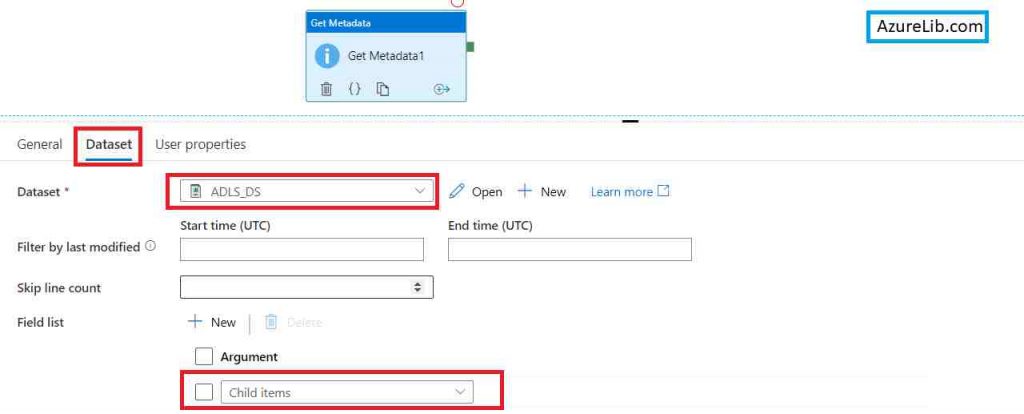

- Use the dataset that points to our source Azure Blob Storage folder location. If you don’t know how to create a dataset for Azure Blob Storage, please visit this link: Link

- Now you can see that we are pointing to our source folder location. Inside the Get Metadata activity, under the Dataset tab, add the Field list entry named Child Items. This returns the list of all the files (and any subfolders) available inside this folder. Our Get Metadata activity is now ready to pull the list of all files.

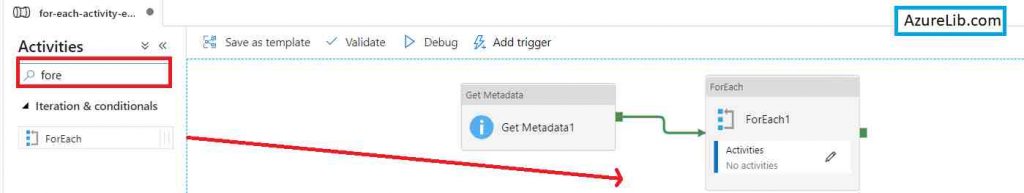

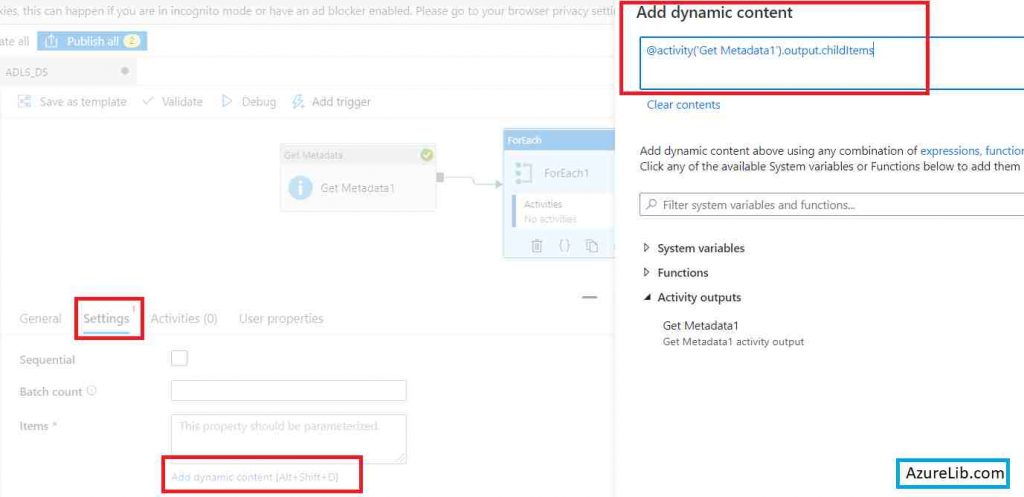

- Go back to the activity search box and this time search for the ForEach activity. Drag and drop it into your pipeline designer tab, and connect the output of the Get Metadata activity to the input of the ForEach activity.

- Configure the ForEach activity: in the Items field, click Add dynamic content and use an expression that reads the output of the Get Metadata activity to get the list of files. This will be an array of all the files available inside our source folder, which is what we want to iterate over.

- Click the pencil icon on the ForEach activity to edit it; you will now be inside the ForEach activity. Here again, go back to the activity search box, search for the Copy activity, and drag and drop it inside the ForEach activity.

You can access the current item of each ForEach iteration by using @item().

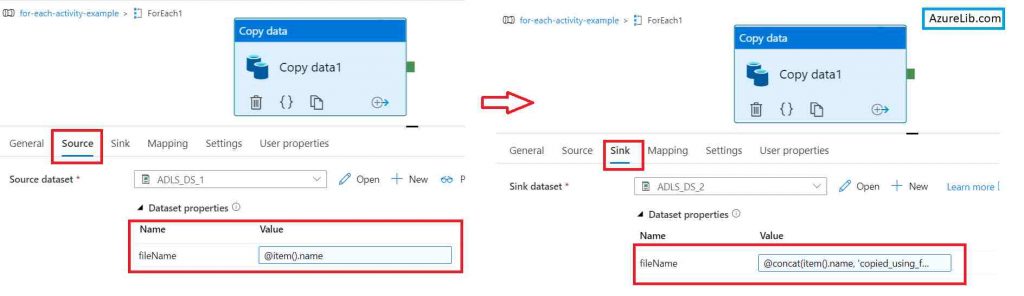

- Inside the Source tab of the Copy activity, select the dataset. This will again be the same Azure Blob Storage dataset pointing to the source folder, and here you pass the file name as a parameter. The file name is the name of the current item in the iteration (@item().name).

- In the Sink tab, use another Blob Storage dataset that points to our destination folder. Here, use a parameter to pass the new file name to the dataset.

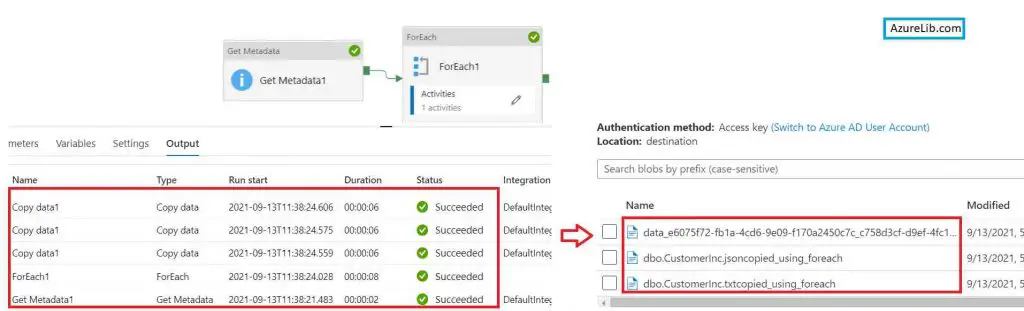

That’s it. Now go back to the pipeline and click Debug to run it and check that everything works.

We have successfully created a pipeline using the Get Metadata, ForEach, and Copy activities to copy data from one folder location to another with a new name.
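For readers who prefer the code view, the pipeline built above could look roughly like the definition below, written here as a Python dictionary that mirrors the pipeline JSON. Treat it as a sketch under assumptions: the activity names (`GetFileList`, `ForEachFile`, `CopyOneFile`), the dataset names, the `fileName` dataset parameter, the `copy_` prefix, and the choice of binary source and sink are all placeholders, not values taken from the screenshots.

```python
import json

# Get Metadata: ask for the child items of the source folder (hypothetical dataset name).
get_metadata = {
    "name": "GetFileList",
    "type": "GetMetadata",
    "typeProperties": {
        "dataset": {"referenceName": "SourceFolderDataset", "type": "DatasetReference"},
        "fieldList": ["childItems"],
    },
}

# Copy: runs once per iteration; @item() is the current element of the array.
copy_file = {
    "name": "CopyOneFile",
    "type": "Copy",
    "inputs": [{
        "referenceName": "SourceFileDataset",
        "type": "DatasetReference",
        "parameters": {"fileName": {"value": "@item().name", "type": "Expression"}},
    }],
    "outputs": [{
        "referenceName": "DestinationFileDataset",
        "type": "DatasetReference",
        "parameters": {
            "fileName": {"value": "@concat('copy_', item().name)", "type": "Expression"}
        },
    }],
    # Assumption: both datasets are binary; other formats use other source/sink types.
    "typeProperties": {"source": {"type": "BinarySource"}, "sink": {"type": "BinarySink"}},
}

# ForEach: iterates over the array returned by the Get Metadata activity.
for_each = {
    "name": "ForEachFile",
    "type": "ForEach",
    "dependsOn": [{"activity": "GetFileList", "dependencyConditions": ["Succeeded"]}],
    "typeProperties": {
        "items": {"value": "@activity('GetFileList').output.childItems", "type": "Expression"},
        "activities": [copy_file],
    },
}

pipeline = {
    "name": "for-each-activity-example",
    "properties": {"activities": [get_metadata, for_each]},
}

if __name__ == "__main__":
    print(json.dumps(pipeline, indent=2))
```

Printing the dictionary with `json.dumps` gives JSON you can compare against what the pipeline's code view shows for your own activity and dataset names.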

How to run foreach activity in Azure Data Factory in Sequential Manner

The Azure Data Factory ForEach activity runs its iterations in parallel by default so that you get results faster. However, there are situations where you want to process the items sequentially, one by one, rather than running all the iterations in parallel.

So how can we ensure that the ForEach activity runs all its iterations sequentially?

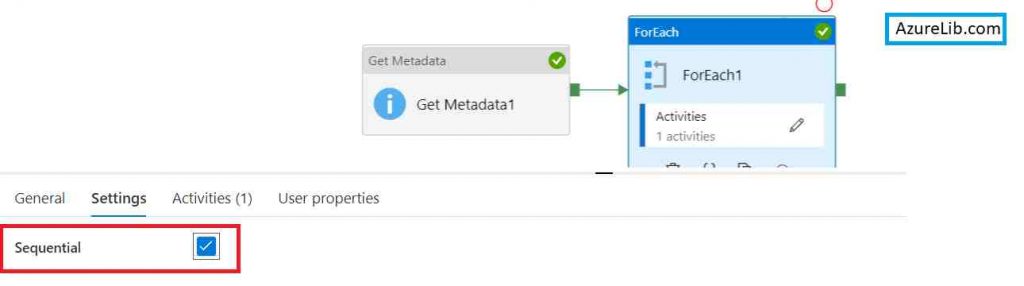

It can be done by enabling one checkbox. Go to the ForEach activity; under Settings there is a checkbox named Sequential. Check it, and the ForEach activity will run its iterations one after another.
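In the pipeline's JSON definition, the Sequential checkbox corresponds to the `isSequential` property of the ForEach activity. The sketch below builds that part of the definition as a Python dictionary; the helper name `sequential_foreach` and the activity name `GetFileList` are hypothetical.

```python
import json


def sequential_foreach(items_expression: str) -> dict:
    """Return ForEach typeProperties with the Sequential option switched on."""
    return {
        # Equivalent of ticking the Sequential checkbox in the Settings tab.
        "isSequential": True,
        "items": {"value": items_expression, "type": "Expression"},
        # Inner activities are omitted here; a real ForEach needs at least one.
        "activities": [],
    }


if __name__ == "__main__":
    # 'GetFileList' is a hypothetical activity name.
    props = sequential_foreach("@activity('GetFileList').output.childItems")
    print(json.dumps(props, indent=2))
```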

How to set the number of parallel executions in the foreach activity of Azure Data Factory?

When you use the Azure Data Factory ForEach activity to repeat work over an array of values, you may want to limit the number of parallel paths to a specific number. You can set the number of parallel executions in the ForEach activity by changing a single value.

Go to the ForEach activity; under the Settings tab there is a Batch count field. Enter the number of parallel paths you want for the ForEach activity.

For example, if you want 10 parallel executions of the ForEach iterations, set the value to 10.

What is the maximum number of parallel execution count for foreach activity?

In the ForEach activity, under the Settings tab, there is a Batch count text box. This value sets the upper limit on the number of parallel execution paths for the ForEach activity.

The maximum value for ADF foreach batch count is 50.

What is the minimum number of parallel execution or batch count value for foreach activity?

1 is the minimum value for the batch count. With this value, the ForEach activity effectively runs its iterations one at a time instead of in parallel.

What is the default number of parallel execution or batch count value for foreach activity?

20 is the default value for the batch count of the ForEach activity, according to the Microsoft documentation.
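The limits above can be captured in a small helper that checks a batch count before it goes into the ForEach settings. This is an illustrative sketch: `parallel_settings` is a made-up function name, while `isSequential` and `batchCount` are the property names used in the ForEach JSON definition. When no batch count is supplied, the property is left out so that the service applies its own default.

```python
from typing import Optional

MIN_BATCH_COUNT = 1   # minimum value discussed above
MAX_BATCH_COUNT = 50  # maximum value discussed above


def parallel_settings(batch_count: Optional[int] = None) -> dict:
    """Build the parallelism part of a ForEach activity's typeProperties."""
    settings = {"isSequential": False}
    if batch_count is not None:
        if not MIN_BATCH_COUNT <= batch_count <= MAX_BATCH_COUNT:
            raise ValueError(
                f"batch count must be between {MIN_BATCH_COUNT} and {MAX_BATCH_COUNT}"
            )
        settings["batchCount"] = batch_count
    return settings


if __name__ == "__main__":
    print(parallel_settings(10))
    print(parallel_settings())
```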

How to implement nested foreach activity in Azure Data Factory?

Nesting ForEach activities in Azure Data Factory is not allowed. You cannot directly place one ForEach activity inside another ForEach activity.

However, there is a workaround. When you need nested iteration, create two separate pipelines, each with its own ForEach activity, and call the second pipeline from inside the ForEach activity of the first pipeline using the Execute Pipeline activity.
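As a rough sketch of this workaround, the two pipelines could be defined along the following lines, shown as Python dictionaries that mirror the pipeline JSON. All pipeline, activity, and parameter names here (`outer-pipeline`, `inner-pipeline`, `databases`, `tableList`, and the `tables` property on each item) are hypothetical.

```python
import json

# Inner pipeline: owns the second loop and receives its list as a parameter.
inner_pipeline = {
    "name": "inner-pipeline",
    "properties": {
        "parameters": {"tableList": {"type": "array"}},
        "activities": [{
            "name": "ForEachTable",
            "type": "ForEach",
            "typeProperties": {
                "items": {"value": "@pipeline().parameters.tableList", "type": "Expression"},
                "activities": [],  # per-table activities omitted in this sketch
            },
        }],
    },
}

# Outer pipeline: its ForEach contains an Execute Pipeline activity, not a ForEach.
outer_pipeline = {
    "name": "outer-pipeline",
    "properties": {
        "parameters": {"databases": {"type": "array"}},
        "activities": [{
            "name": "ForEachDatabase",
            "type": "ForEach",
            "typeProperties": {
                "items": {"value": "@pipeline().parameters.databases", "type": "Expression"},
                "activities": [{
                    "name": "RunInnerLoop",
                    "type": "ExecutePipeline",
                    "typeProperties": {
                        "pipeline": {
                            "referenceName": "inner-pipeline",
                            "type": "PipelineReference",
                        },
                        # Pass part of the current outer item to the inner pipeline.
                        "parameters": {
                            "tableList": {"value": "@item().tables", "type": "Expression"}
                        },
                        "waitOnCompletion": True,
                    },
                }],
            },
        }],
    },
}

if __name__ == "__main__":
    print(json.dumps(outer_pipeline, indent=2))
```

The outer loop never contains a ForEach directly; the inner loop lives in its own pipeline and gets its array through a pipeline parameter.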

How to pass JSON array in foreach activity of Azure data factory?

Passing a JSON array to the ForEach activity is no different from passing a normal array. The only difference is in how you access each JSON object.

Your JSON array is an array of JSON objects. When you use these objects, you access their properties with the dot operator.

For example if you have a JSON array like:

    [
      {
        "name": "data_e6075f72-fb1a-4cd6-9e09-f170a2450c7c_c758d3cf-d9ef-4fc1-9fd1-0d4ae4480a34.txt",
        "type": "File"
      },
      {
        "name": "dbo.CustomerInc.json",
        "type": "File"
      },
      {
        "name": "dbo.CustomerInc.txt",
        "type": "File"
      }
    ]

Then you can access the values of each JSON object properties using dot (.) operator like:

@item().name

@item().type
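If it helps to think of this in programming terms, the loop below is a small Python analogy of what the ForEach activity does with such an array: each element plays the role of @item(), and its properties are read by name. The `describe` helper is made up for illustration.

```python
import json

# The same array as in the example above.
child_items = json.loads("""
[
  {"name": "data_e6075f72-fb1a-4cd6-9e09-f170a2450c7c_c758d3cf-d9ef-4fc1-9fd1-0d4ae4480a34.txt", "type": "File"},
  {"name": "dbo.CustomerInc.json", "type": "File"},
  {"name": "dbo.CustomerInc.txt", "type": "File"}
]
""")


def describe(items: list) -> list:
    """Read the two properties of every item, like @item().name and @item().type."""
    return [f"{item['name']} ({item['type']})" for item in items]


if __name__ == "__main__":
    for line in describe(child_items):
        print(line)
```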

Microsoft Foreach official documentation


Final Thoughts

In this tutorial, we learned what the ForEach activity in Azure Data Factory is and how to implement it in a pipeline. We walked through an example that iterates over the files in a folder location. I also showed how to enable and disable parallel execution of the ForEach activity, and how the batch count setting controls the number of parallel execution paths. Lastly, we saw how to handle a JSON array in the ForEach activity.

I hope you enjoyed this article and learned something useful today. Please let me know if you have any suggestions, comments, or questions; I will try to respond to all your queries as early as possible.