As with other Azure pay-as-you-go services, you might think that calculating the price of Azure Data Factory would be a straightforward task. However, it is not. A lot of things are involved in calculating the cost of a pipeline run in ADF. In this article I will explain every detail needed to understand the Azure Data Factory pricing model, and after reading it you will probably be saving $$$ for your organization.

Read / Write: $0.50 per 50 000 modified entities

Monitoring: $0.25 per 50 000 run records retrieved

Data Pipeline Self hosted :

Orchestration $1.50 per 1000 runs

Data Movement Activities : $0.10 per DIU-hour

Pipeline Activities : $0.002 per hour

External Activities : $0.0001 per hour

Data Pipeline Azure :

Orchestration $1 per 1000 runs

Data Movement Activities : $0.25 per DIU-hour

Pipeline Activities : $0.005 per hour

External Activities : $0.00025 per hour

Additional Cost

Per Inactive Pipeline: $0.80 per month

Outbound Data Transfer: $0.05 – $0.087 per GB

Data flow debugging and execution

Compute optimized : $0.199 per vCore-hour

General Purpose : $0.268 per vCore-hour

Memory optimized : $0.345 per vCore-hour

SQL Server Integration Services

Standard D1 V2: $0.592 per node per hour

Standard E64 V3: $18.212 per node per hour

Enterprise D1 V2: $1.665 per node per hour

Enterprise E64 V3: $35.372 per node per hour

We will look at the different pricing models and explain them in detail, so that you can better estimate your costs when you start working with Azure Data Factory pipelines.

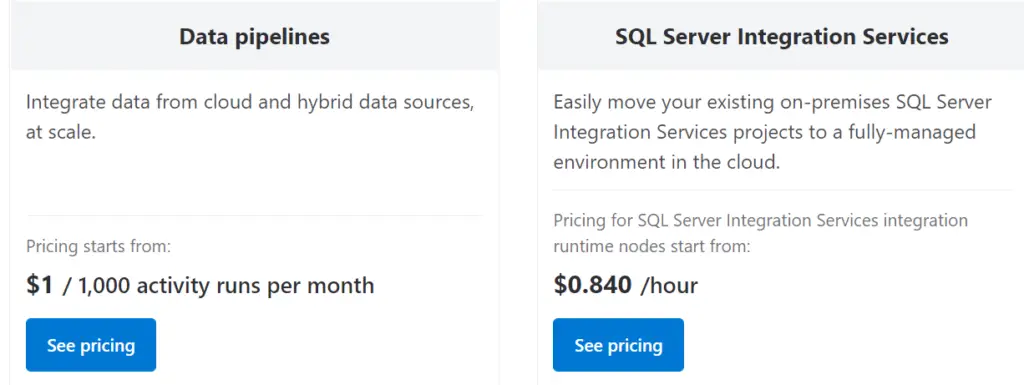

Basically, Azure Data Factory pricing is divided into two parts: one is data pipelines and the other is SQL Server Integration Services.

You might think it is very simple, since it says $1 per 1,000 runs and that it starts from 84 cents per hour. However, it is not that straightforward: even though pricing starts from those figures, you eventually end up paying a lot of other charges.

To understand the pricing better, let's divide it into four models:

- Azure Data Factory Operations

- Data Pipeline Orchestration and Execution

- Data Flow Debugging and Execution

- SQL Server Integration Services

Azure Data Factory Operations

Read / Write: $0.50 per 50 000 modified entities

Operations: Create, Read, Update, Delete

Entities: Datasets, Linked Services, Pipelines, Integration Runtimes, Triggers

Monitoring: $0.25 per 50 000 run records retrieved

Operations: Get, List

Run Records: Pipeline Runs, Activity Runs, Trigger Runs, Debug Runs

This includes all the operations you perform inside Azure Data Factory. For example, every read, write, update, or delete costs you. Whenever you work with datasets, linked services, pipelines, integration runtimes, and so on, you are charged. These are not huge costs overall, but they are still costs you have to pay for using ADF.

When you have a large team doing a lot of development and monitoring, this can turn into a significant cost.
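To get a feel for how these operations charges add up, here is a minimal sketch in Python. It uses the two rates quoted above and assumes the charge is prorated linearly per operation, which may not match how the bill is actually rounded. The function name and the team's monthly volumes are hypothetical.

```python
# Rates as quoted in this article (USD); check the current Azure price list
# before relying on them.
READ_WRITE_RATE = 0.50 / 50_000   # per modified entity operation
MONITORING_RATE = 0.25 / 50_000   # per run record retrieved


def operations_cost(entity_operations: int, run_records: int) -> float:
    """Estimate the ADF operations charge in USD, assuming linear proration."""
    return entity_operations * READ_WRITE_RATE + run_records * MONITORING_RATE


# Hypothetical team: 200,000 entity operations and 1,000,000 run records
# retrieved in one month.
monthly = operations_cost(200_000, 1_000_000)
print(f"Estimated operations cost: ${monthly:.2f}")
```

Even at these volumes the result is only a few dollars, which fits the point that operations are a small but non-zero part of the bill.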

Data Pipeline Orchestration and Execution

This is one of the most painful areas from the Azure Data Factory cost perspective. You have two choices for running your ADF workload: you can use the Azure-provided integration runtime, or you can run the pipeline in your own self-hosted environment. The orchestration and execution costs vary with the type of integration runtime used.

Data Pipelines on Self-Hosted Integration Runtime :

Orchestration $1.50 per 1000 runs

Data Movement Activities : $0.10 per DIU-hour

Pipeline Activities : $0.002 per hour

External Activities : $0.0001 per hour

When you're orchestrating, every activity run carries a small cost: $1.50 per 1,000 runs, which covers activity runs, trigger runs, and debug runs. The real cost comes from the activities inside those pipelines. For execution, there are three types of activities that you can run inside a pipeline. The first is the data movement activity, which is basically the Copy Data activity. As you can see, this is where the largest cost inside a data pipeline is: 10 cents per DIU-hour. That's the heavy lifting, the core of Azure Data Factory, and it's what you pay the most for.

Then you have pipeline activities. These are things like Lookup or Get Metadata activities that run inside the pipeline. The last category is external activities. Those are activities you orchestrate but that run somewhere else, for example inside Azure Databricks, or a stored procedure on an on-premises SQL Server. You need to understand which type of activity you're running to get the right estimate. So these are the costs for self-hosted.
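The three execution categories can be sketched as a small lookup in Python. This is only an illustration built on the self-hosted rates quoted above. The activity names, the `CATEGORY` mapping, and the `units` parameter (which scales the data movement rate, since the article quotes it per DIU-hour) are assumptions made for the example, not part of any Azure API.

```python
# Self-hosted integration runtime rates as quoted in this article (USD).
ORCHESTRATION_PER_RUN = 1.50 / 1000
HOURLY_RATE = {
    "data_movement": 0.10,   # quoted per DIU-hour
    "pipeline": 0.002,
    "external": 0.0001,
}

# Hypothetical lookup from an activity name to its billing category.
CATEGORY = {
    "copy": "data_movement",
    "lookup": "pipeline",
    "get_metadata": "pipeline",
    "databricks_notebook": "external",
    "stored_procedure": "external",
}


def activity_run_cost(activity: str, minutes: float, units: float = 1.0) -> float:
    """Orchestration charge for one run plus execution time at the category rate.

    `units` scales the data movement rate only; other categories ignore it.
    """
    category = CATEGORY[activity]
    multiplier = units if category == "data_movement" else 1.0
    return ORCHESTRATION_PER_RUN + HOURLY_RATE[category] * multiplier * minutes / 60


for name, minutes in [("copy", 30), ("lookup", 1), ("stored_procedure", 5)]:
    print(f"{name:17s} ${activity_run_cost(name, minutes):.6f}")
```

Running it shows the copy activity dominating the other two by more than an order of magnitude, which is why classifying each activity correctly matters for an estimate.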

Data Pipelines on Azure Integration Runtime :

Orchestration $1 per 1000 runs

Data Movement Activities : $0.25 per DIU-hour

Pipeline Activities : $0.005 per hour

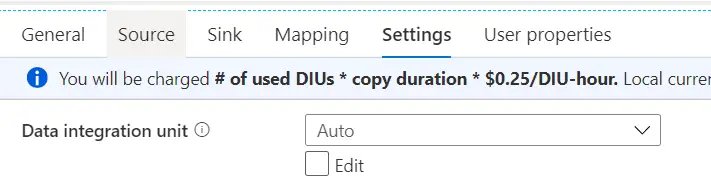

External Activities : $0.00025 per hourCost for orchestration is a little lower, but the cost for execution is a little higher. it’s not significant, but there is a small increase in cost for execution, and that’s basically just because azure takes care of all the infrastructure for you . you’re using their compute services, and that’s the cost you’re paying for that. You might be wandering what is per DU hour and what does that really mean when running azure data factory pipelines. Inside of copy data activity, if you click on the settings tab, you can see that we set the data integration units, DU the default setting for that is auto.

Do you know what number is used when you set it to Auto? Auto means that Azure Data Factory will scale up for you if necessary, to keep things running as quickly and efficiently as possible. But it also means that the lowest number of data integration units it will use is 4. You can change that setting and set the number to 2 yourself, and this has a big effect on the cost of the Copy Data activity: if you leave everything at the Auto default, you're paying for four times the time that the Copy Data activity runs.

My recommendation is to look at your Copy Data activity settings and start by changing the value to 2. If that is not enough compute power, you can always scale it back up. But if 2 is enough, you've basically cut the cost of that Copy Data activity in half. Just be aware that Auto defaults to 4 as a minimum, while 2 is what you can set on your own. It's a little trick to keep the cost of your own solutions as low as possible.
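The effect of the DIU setting is easy to check with a few lines of Python. This is a rough sketch using the $0.25 per DIU-hour rate quoted above; the function names and the 10-minute copy are made up for illustration. It also adds one consideration the halving argument depends on: with fewer DIUs the copy may run longer, so the saving only holds as long as the runtime less than doubles.

```python
AZURE_IR_DATA_MOVEMENT_RATE = 0.25  # USD per DIU-hour, as quoted in this article


def copy_cost(minutes: float, dius: int) -> float:
    """Execution cost of one Copy Data run: rate x DIUs x hours."""
    return AZURE_IR_DATA_MOVEMENT_RATE * dius * minutes / 60


def break_even_minutes(minutes_at_4_dius: float, lower_dius: int = 2) -> float:
    """Longest runtime at `lower_dius` that costs no more than the 4-DIU run."""
    return minutes_at_4_dius * 4 / lower_dius


print(f"10 min at 4 DIUs: ${copy_cost(10, 4):.4f}")
print(f"10 min at 2 DIUs: ${copy_cost(10, 2):.4f}")   # same runtime, half the cost
print(f"15 min at 2 DIUs: ${copy_cost(15, 2):.4f}")   # slower, but still cheaper
print(f"Break-even runtime at 2 DIUs: {break_even_minutes(10):.0f} min")
```

So if a copy that took 10 minutes at 4 DIUs finishes in anything under 20 minutes at 2 DIUs, the lower setting is still the cheaper one.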

Additional Cost:

Per Inactive Pipeline: $0.80 per month

If you have inactive pipelines, there's a small cost associated with them. Tell your developers: if you don't use a pipeline anymore, clean it up and delete it, because otherwise it's going to cost a little bit of money.

Outbound Data Transfer: $0.05 - $0.087 per GB

If you're moving data from an Azure region to outside of Azure, there is a small cost for bandwidth and network usage. That goes for all Azure services, but it shows up as an additional row on the invoice. In case you're wondering what that bandwidth or networking row is, it means you're moving data out of the Azure region.
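These two additional charges can be combined into a quick monthly range estimate. The sketch below, in Python, uses the figures quoted above; because the outbound rate is given as a range, it returns a lower and an upper bound. The function name and the sample numbers (25 idle pipelines, 500 GB of egress) are hypothetical.

```python
from typing import Tuple

INACTIVE_PIPELINE_PER_MONTH = 0.80   # USD, as quoted in this article
EGRESS_PER_GB_LOW = 0.05             # USD per GB, lower end of the quoted range
EGRESS_PER_GB_HIGH = 0.087           # USD per GB, upper end of the quoted range


def additional_cost_range(inactive_pipelines: int, egress_gb: float) -> Tuple[float, float]:
    """Return (low, high) monthly estimates for idle pipelines plus outbound data."""
    idle = inactive_pipelines * INACTIVE_PIPELINE_PER_MONTH
    return (idle + egress_gb * EGRESS_PER_GB_LOW,
            idle + egress_gb * EGRESS_PER_GB_HIGH)


low, high = additional_cost_range(inactive_pipelines=25, egress_gb=500)
print(f"Additional monthly cost: ${low:.2f} to ${high:.2f}")
```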

Data Flow Debugging and Execution

This is a different pricing model again; it depends entirely on the compute type you use to run your data flows. Data flows run on Azure Databricks clusters behind the scenes, and you can choose compute optimized, general purpose, or memory optimized compute. Each cluster type has a different price point.

Compute optimized : $0.199 per vCore-hour

General Purpose : $0.268 per vCore-hour

Memory optimized : $0.345 per vCore-hour

Just make sure you're scaling appropriately: always start with the lowest tier and see how the performance looks overall. Do some testing before you start scaling up, unless you have money to burn.
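To compare the three compute types for the same job, a small Python sketch like the following can help. It multiplies the vCore-hour rates quoted above by cluster size and runtime. The 8-vCore cluster and 30-minute run are hypothetical inputs, and the sketch assumes the runtime is the same on every compute type, which real workloads may not satisfy.

```python
# Data flow rates as quoted in this article (USD per vCore-hour).
DATA_FLOW_RATES = {
    "compute_optimized": 0.199,
    "general_purpose": 0.268,
    "memory_optimized": 0.345,
}


def data_flow_cost(compute_type: str, vcores: int, minutes: float) -> float:
    """Cost of one data flow run or debug session: rate x vCores x hours."""
    return DATA_FLOW_RATES[compute_type] * vcores * minutes / 60


# Hypothetical job: 8 vCores for 30 minutes on each compute type.
for compute_type in DATA_FLOW_RATES:
    print(f"{compute_type:18s} ${data_flow_cost(compute_type, 8, 30):.3f}")
```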

SQL Server Integration Services

Standard D1 V2: $0.592 per node per hour

Standard E64 V3: $18.212 per node per hour

Enterprise D1 V2: $1.665 per node per hour

Enterprise E64 V3: $35.372 per node per hour

This is also a different model in itself. With SQL Server Integration Services, if you're doing a lift and shift, you are creating a set of virtual machines behind the scenes. When you set up the integration runtime for SSIS, you have to choose the virtual machine size. You can start with the cheapest one, with one core and 3.5 GB of RAM, at about 60 cents per node per hour. If you scale up to one of the largest virtual machines, with 64 cores and 400-plus GB of RAM, you're looking at about $18 per node per hour, and this is for the Standard edition of SQL Server.
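Because SSIS is billed per node per hour, the monthly figure depends mostly on how many hours the runtime is up. A minimal Python sketch with the four rates quoted above follows; the dictionary keys, the function name, and the 8-hours-a-day scenario are assumptions made for the example.

```python
# SSIS integration runtime rates as quoted in this article (USD per node per hour).
SSIS_RATES = {
    ("standard", "D1_v2"): 0.592,
    ("standard", "E64_v3"): 18.212,
    ("enterprise", "D1_v2"): 1.665,
    ("enterprise", "E64_v3"): 35.372,
}


def ssis_monthly_cost(edition: str, size: str, nodes: int,
                      hours_per_day: float, days: int = 30) -> float:
    """Monthly cost for a given edition and VM size, by hours the runtime is up."""
    return SSIS_RATES[(edition, size)] * nodes * hours_per_day * days


always_on = ssis_monthly_cost("standard", "D1_v2", nodes=1, hours_per_day=24)
work_hours = ssis_monthly_cost("standard", "D1_v2", nodes=1, hours_per_day=8)
print(f"Standard D1 v2, 24 h/day: ${always_on:.2f}")
print(f"Standard D1 v2,  8 h/day: ${work_hours:.2f}")
```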

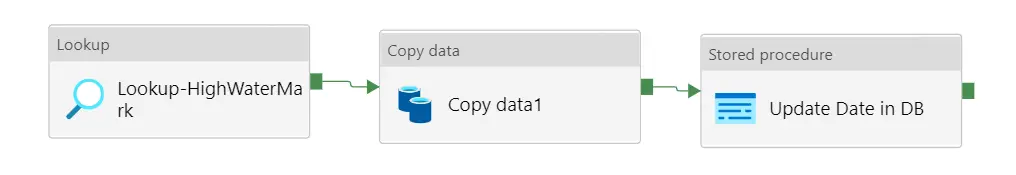

Example to calculate the price of Azure Data factory Pipeline

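Let's calculate the price of one pipeline run on the Azure integration runtime, with three activities: a Lookup, a Stored Procedure, and a Copy activity using 4 DIUs: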

Activity Run : 3 * ($1/1000) = $ 0.003

Lookup : 3 min * ($ 0.005/60) = $ 0.00025

Stored Procedure: 2 min * ($ 0.00025/60) = $0.000008

Copy activity : 10 min * ($ 0.25/60)* 4 DIUs = $0.167

Total Cost per Pipeline Run = $0.17

Total Cost Per 30 Days daily run = $5.1

Total Cost Per 30 Days Daily run for 100 Tables = $510

How to reduce Azure Data Factory Pipeline cost

- Remove all unused pipelines, as they also cost money.

- Set the DIU to the minimum value instead of leaving it on Auto. (This could cut the copy cost in half.)

- Reduce the number of activities if possible.

- For SQL Server Integration Services, choose the smallest node configuration that meets your needs.
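The worked pipeline example from the previous section can be reproduced in a few lines of Python, which makes it easy to plug in your own durations. This is a sketch using the Azure integration runtime rates quoted in this article; the function and parameter names are made up for illustration. Computed without intermediate rounding, it gives about $0.1699 per run, about $5.10 for 30 daily runs, and about $509.78 for 100 tables.

```python
# Azure integration runtime rates as quoted in this article (USD).
ORCHESTRATION_PER_RUN = 1.00 / 1000
PIPELINE_ACTIVITY_PER_HOUR = 0.005
EXTERNAL_ACTIVITY_PER_HOUR = 0.00025
DATA_MOVEMENT_PER_DIU_HOUR = 0.25


def pipeline_run_cost(lookup_min: float, stored_proc_min: float,
                      copy_min: float, dius: int, activity_runs: int = 3) -> float:
    """Cost of one pipeline run: orchestration plus the three activity charges."""
    orchestration = activity_runs * ORCHESTRATION_PER_RUN
    lookup = lookup_min / 60 * PIPELINE_ACTIVITY_PER_HOUR
    stored_proc = stored_proc_min / 60 * EXTERNAL_ACTIVITY_PER_HOUR
    copy = copy_min / 60 * DATA_MOVEMENT_PER_DIU_HOUR * dius
    return orchestration + lookup + stored_proc + copy


per_run = pipeline_run_cost(lookup_min=3, stored_proc_min=2, copy_min=10, dius=4)
per_month = per_run * 30            # one run per day for 30 days
per_100_tables = per_month * 100    # same pipeline repeated for 100 tables

print(f"Per run:        ${per_run:.4f}")
print(f"Per 30 days:    ${per_month:.2f}")
print(f"For 100 tables: ${per_100_tables:.2f}")
```

Almost all of the per-run cost comes from the copy activity, so that is the term worth tuning first.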

Final Thoughts

Calculating Azure Data Factory pricing is not a straightforward task; working out the price of every pipeline run is an uphill one. A lot of factors are involved in the pricing. Whatever you do in Azure Data Factory, however small, adds cost to your bill.

Hence it's important that your developers learn and understand the pricing model well enough to build pipelines in the most optimized way.